AI does not make everything better. Let us be honest: the following phenomena have become familiar to all of us, and we have either been irritated by them or, at the very least, wondered about them. Scrolling through LinkedIn reveals the same infographics and carousels over and over: all visually polished, all strikingly similar in content and layout, with optimised blocks of text and images that share an identical aesthetic signature. Much of it looks appealing, yet feels strangely lifeless. The sense that something is not quite right with this content is difficult to shake.

And who has not encountered those emails that open with formulations rarely seen in European business correspondence before generative models entered everyday work? “I hope this email finds you well”, for instance, written in a tone that does not quite match the sender or the context.

These are just two examples of the ways in which we are confronted daily with the output of AI systems. The kind of output one notices, but grows accustomed to and eventually scrolls past. AI fatigue is real. The term describes a mix of mental tiredness, sensory overload, and cognitive exhaustion that emerges from the constant confrontation with AI and its results.

This article places the phenomenon in context. The underlying thesis: AI fatigue is predominantly framed as an individual problem. Those who feel exhausted are advised to take breaks, set boundaries, and, a further popular suggestion in this space, use AI more consciously.

Our view: these recommendations fall short, and the issue extends beyond individual discomfort. The real cause lies in a structural mismatch between the logic by which AI works and the logic by which organisations operate. For AI fatigue not to become a lasting condition and a genuine impairment, a different awareness is required, along with a recalibration of organisational structures.

TL;DR:

AI fatigue describes the exhaustion that arises from continuous exposure to AI. It is typically treated as an individual problem but is, in fact, structural. Research into technostress has described comparable patterns for more than forty years. What is new is the acceleration brought by AI. The strain becomes particularly acute where individuals have long been working with AI, while their organisations remain locked into routines of control. AI logic is iterative; many organisations operate according to a planning logic. This contradiction is the real driver of fatigue. Organisations that want to use AI sustainably must address five areas: treat psychological safety as a precondition, create transparency about the implications for roles and tasks, redesign workflows rather than simply roll out tools, build lasting competence, and shape the introduction of AI iteratively.

What is AI fatigue, and where does it come from?

AI fatigue has taken hold as a term in its own right. In studies, trade publications, and on platforms such as LinkedIn, it has been in circulation since 2023, used to describe a whole cluster of symptoms: mental exhaustion from continuous AI interaction, frustration with AI-generated content, evaluation pressure when reviewing AI outputs, the sense of being able to produce more thanks to AI whilst simultaneously being expected to produce more still.

The term may be young, but the phenomenon behind it is real and measurable. In psychological research, the term technostress, coined more than forty years ago, captures the burdens that information technology places on people in the workplace. The construct was operationalised in 2007 by Monideepa Tarafdar and colleagues in the widely cited study The Impact of Technostress on Role Stress and Productivity, published in the Journal of Management Information Systems [1]. The authors identified five stressors: techno-overload, techno-invasion, techno-complexity, techno-insecurity (concerning one’s own job), and techno-uncertainty (concerning the technology itself).

A systematic review by Sapkota and colleagues, published in 2025, shows that AI amplifies these classical stressors and adds further factors [2]. Among them: the unpredictability of generative models, the risk of AI hallucinations, ethical conflicts in AI use, and the fear of gradually losing professional competence to artificial intelligence.

The authors systematically analysed sixty-six studies. Their conclusion: AI does not generate a new pathology; it produces a new intensity of a long-familiar phenomenon.

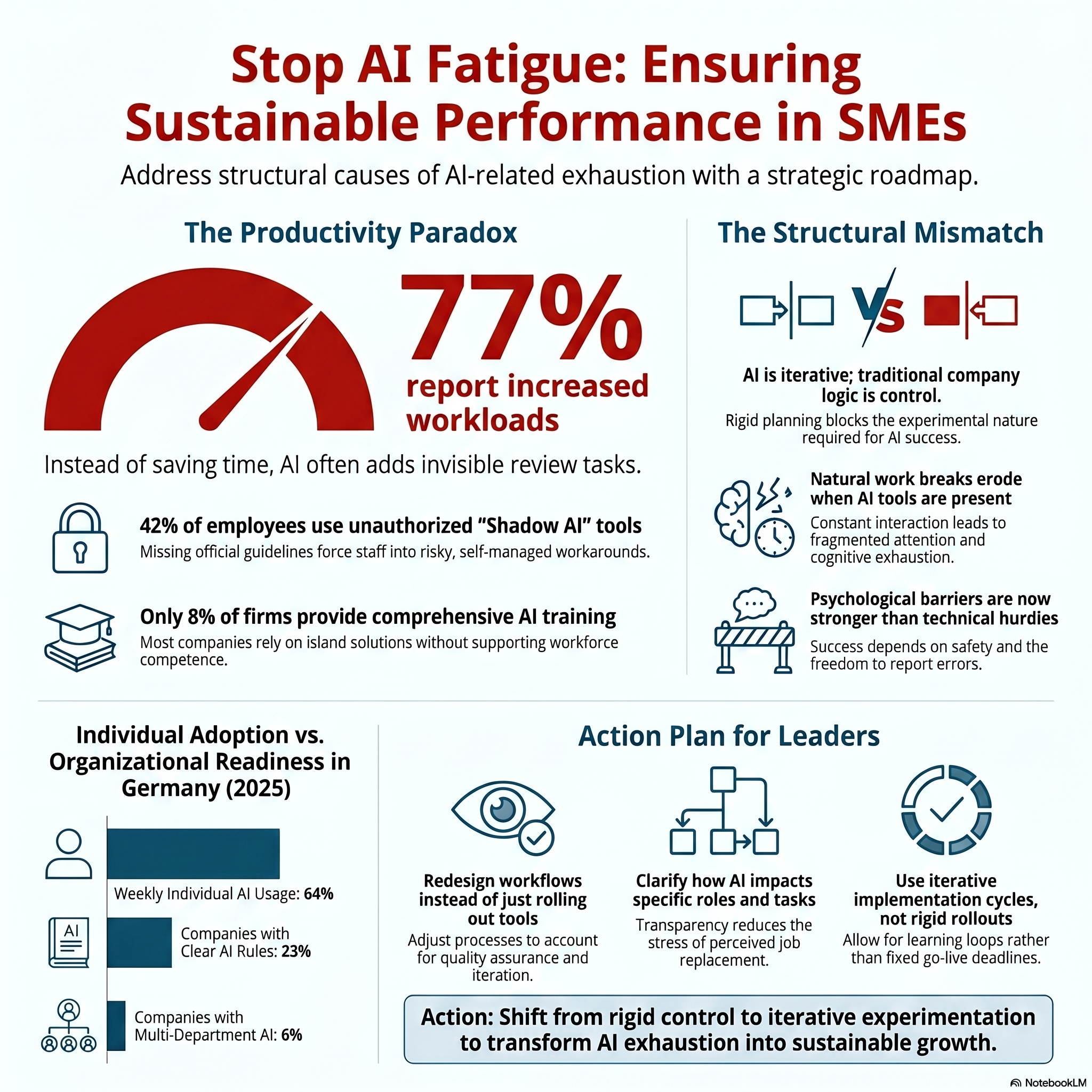

How powerful this acceleration has become is illustrated by several independent pieces of evidence. A 2024 survey by the freelance marketplace Upwork, which already feels historical given the pace of AI development, found that seventy-seven per cent of AI-using employees reported that AI tools had increased their workload rather than reduced it [3].

An eight-month field study from the University of California, Berkeley, into working habits under AI adoption revealed that natural breaks in the working day systematically erode once AI tools are available [4]. We have already placed these observations in context in our article “AI relieves pressure and simultaneously tempts overwork”.

The central point for the argument that follows: AI fatigue does not arise because AI performs poorly. It arises because AI performs so well that the established conditions within organisations no longer suffice for this new form of knowledge work.

Are employees already ahead of their organisations?

A striking pattern emerges from the adoption data for AI in Germany. At the individual level, AI has arrived. At the organisational level, Germany continues to lag its European and international peers. And the gap between the two levels is widening rather than closing.

The BCG report AI at Work 2025 shows a weekly individual AI-usage rate of sixty-four per cent among surveyed knowledge workers in Germany. For comparison: in the United States, the figure stands at fifty-one per cent [5]. German employees therefore use AI more frequently, not less, than their American counterparts.

There are also encouraging signals at the organisational level. The Bitkom study Breakthrough in Artificial Intelligence, published in September 2025, reports that thirty-six per cent of German companies now use AI [6]. That is roughly double the 2024 figure.

Equally important, however, are the following data points that may prove decisive for the question of AI fatigue:

- Only eight per cent of companies offer AI training to all employees. Forty-three per cent offer no training at all [6].

- The Institut der deutschen Wirtschaft (IW Cologne), in its 2025 report Artificial Intelligence as a Competitive Factor, notes that only six per cent of companies deploy AI across several business areas [7]. The remainder operates with isolated solutions.

We have examined the organisational challenges in greater depth in our article on the AI Transformation Matrix.

Viewed together, the picture appears almost paradoxical. A further Bitkom survey from October 2025, covering 604 companies, confirms the divergence: forty-two per cent of companies assume that their employees are using private AI tools at work. In eight per cent of cases, this practice is widespread, a doubling compared with 2024 [8]. Only twenty-three per cent of companies have any rules in place governing AI use, and only twenty-six per cent provide their employees with official access to generative AI.

In summary: many employees in German companies use AI intensively, frequently without official authorisation. Their employers have, in many cases, not yet created the structural conditions for this use. Training is absent, clear guidelines are missing, and integrated processes have yet to be established.

This gap is a central driver of AI fatigue, though not the only one. Even where companies do provide official AI access, fatigue can arise if workflows remain unchanged, training stays superficial, or a culture of control blocks the iterative logic that AI requires.

Why does AI logic collide with the logic of many organisations?

Here, perhaps, lies the structural core of the problem. AI follows a different logic from the established processes of many organisations.

The logic of AI is iteration. Anyone who has worked with AI knows this only too well. Results are never perfect on the first attempt. Instead, a cycle unfolds: hypothesis, prompt, result, adjustment, renewed attempt. Each step is marked by uncertainty. The results are not deterministic. Quality emerges through repetition, variation, comparison, and the deliberate provision of context and reference material to the AI. In short: productive AI work is, to a significant extent, experimental work.

The logic of many organisations, by contrast, is shaped by an approach that might broadly be described as “control”. Planning, safeguarding, approval loops, documentation. Uncertainty is to be reduced as far as possible, not cultivated. Stability emerges through predictability. Productive work means work according to plan. Ambiguity is avoided wherever possible.

Both logics are, in themselves, entirely reasonable. Their coexistence, however, can generate friction if the different layers are not deliberately aligned. And this friction can itself contribute to AI fatigue.

The friction is not new. It is a familiar accompaniment to any innovation working its way into established structures. What is new is the speed with which it becomes visible in daily work once AI enters the picture. A survey commissioned by Infosys Topaz and conducted by MIT Technology Review Insights among 500 executives worldwide, published in December 2025, concludes that psychological barriers now act more powerfully than technical ones when it comes to the successful introduction of AI [9]. Eighty-three per cent of the executives surveyed stated that a culture of strong psychological safety measurably improves the success of AI initiatives.

The findings of that survey align with a scholarly tradition that is considerably older. The Harvard Business School professor Amy Edmondson articulated the concept of psychological safety as early as 1999 in Administrative Science Quarterly [10]. In a field study of fifty-one teams, she showed that teams learn more effectively when their members can flag errors, ask questions, and introduce divergent perspectives without fearing social consequences. In her 2018 book The Fearless Organization, she extends this concept to entire organisations [11].

Taken together, the findings make clear that psychological safety no longer belongs in the category of soft cultural factors. It functions as a precondition: without it, AI initiatives do not take hold within the organisation and risk translating directly into personal exhaustion.

Why does this mismatch amplify AI fatigue?

Those who are expected to use AI every day whilst embedded in organisational structures not designed for iterative working may pay a cognitive price.

Three patterns surface repeatedly:

- Review burden without backing. AI produces results quickly. Responsibility for quality assurance, however, remains with the human user. When organisations fail to factor time for such review into their workflows, an invisible layer of additional work accumulates. The greater output feels productive. The exhaustion that arrives by evening is less easy to account for. Microsoft 365 telemetry from early 2025 documents that knowledge workers are interrupted, on average, every two minutes by meetings, emails, or chat notifications [12]. Into this already fragmented attention, AI arrives as an additional demand. Review, assessment, decision, correction.

- Shadow AI as avoidance. The Bitkom survey from October 2025 cited earlier shows that forty-two per cent of German companies assume that private AI tools are being used in their workplace [8]. This is not a sign of bad faith but a response to the absence of official routes. Those who resort to shadow AI bear responsibility not only for the results, but also for data protection, compliance, and interpretation. A lasting state of silent personal accountability generates cognitive load.

- Constant role-switching between execution and oversight. Coordinating parallel AI processes is management work, even when it is asked of employees in operational roles. Which instance needs input now? Where does a result need checking? Which task takes priority? This coordinating effort was rarely demanded in the pre-AI era and is reflected in almost no job description. We have explored these new demands in our article “AI makes us more productive, and that is precisely the danger”.

All three patterns share a common root cause. The organisation expects the benefits of AI but has not yet recognised that new conditions must be created for those benefits to be realised without personal erosion.

What must organisations change to avoid AI fatigue?

If AI fatigue is indeed a structural problem, then individual countermeasures are likely to achieve only limited effect. Organisations that wish to work sustainably with AI face five tasks:

- Treat psychological safety as a precondition. This does not mean merely tolerating disagreement. It means deliberately acknowledging, analysing, and learning from mistakes in AI use. The MIT Technology Review Insights/Infosys survey records that twenty-two per cent of executives admitted to having abandoned an AI project for fear of being blamed [9]. In organisations that deal openly with setbacks, such blockages arise less often.

- Create transparency about the implications for roles and tasks. In the same survey, sixty per cent of executives identified clarity about the concrete impact of AI on roles and tasks as the most important lever for psychological safety [9]. Those who do not know whether their work will be complemented or replaced by AI will be inhibited in working with it. Providing this clarity is a leadership responsibility, not an HR task. Its absence generates chronic stress that may equally manifest as fatigue.

- Redesign workflows rather than simply roll out new tools. McKinsey’s State of AI study from November 2025 identifies the deliberate redesign of working processes as the single strongest factor for measurable EBIT contribution from AI [13]. Companies that fail to adapt their processes tend to generate additional work through AI rather than relief. We have examined this point in depth in our article “Change Management, the underestimated success factor in AI implementation”. Without redesign, AI fatigue becomes the logical consequence.

- Build competence that lasts. Training alone is not enough. What employees need is the confidence to integrate AI into their specific professional context. This reflects the finding of the RBC/Munk School study on the Imagination Gap from 2025. Those who know their own field can use AI meaningfully. Equally important is cultivating awareness that AI tempts users to stray into fields beyond their expertise. However omnipotent AI may appear, the outputs it produces still need to be interpreted and verified. This closes the circle back to review competence.

- Shape the introduction of AI iteratively. Those who introduce AI as though it were a classical IT project reproduce the mismatch between organisational and AI logic at the meta level. A major rollout with a fixed go-live date, detailed specifications, and binding upfront planning does not suit a technology whose value emerges only through testing. More sensible are manageable introduction cycles with clearly defined use cases, explicit learning loops, and the deliberate permission to let insights from the first cycle shape the design of the second. This takes pressure out of the system and reduces the exhaustion that arises when employees are expected to make a rigidly planned initiative work through iteration anyway.

The five levers are interconnected. Building psychological safety whilst leaving workflows untouched improves the surface climate without addressing AI fatigue, which in turn can erode that very climate through the back door. Redesigning workflows without being transparent about the effect on roles and tasks equally generates resistance. Building the necessary competence without allowing an iterative way of working ultimately produces frustration.

What SMEs and family businesses can take away

For SMEs and family businesses, what matters are approaches that work in practice and whose effects can be measured with equally practical indicators. Viewed from this perspective, AI fatigue is a signal, not an inevitable fate. Where exhaustion becomes visible among employees working with AI, the cause lies, in all but the rarest cases, not with the individuals themselves. It lies in conditions that can be improved.

SMEs and family businesses hold advantages that large enterprises do not possess to the same degree. Shorter decision-making paths, flatter hierarchies, direct contact between leadership and delivery. These very advantages help when structures need to be reshaped. AI implementation does not work as a software rollout. It works as cultural work with a pronounced technological component.

The very decisiveness that characterises SMEs can, however, prove a double-edged sword. Speed without systematic preparation leads to mere activity: many tools, little impact, growing frustration. The benefits of speed materialise only when it is coupled with clarity about objectives, roles, and processes. The levers outlined above offer initial points of reference for a pragmatic approach to AI implementation.

Sources

[1] Tarafdar, M., Tu, Q., Ragu-Nathan, B. S., & Ragu-Nathan, T. S. (2007). The Impact of Technostress on Role Stress and Productivity. Journal of Management Information Systems, 24(1), 301–328. https://doi.org/10.2753/MIS0742-1222240109

[2] Sapkota, N., Makkonen, M., Pirkkalainen, H., & Salo, M. (2025). From Technostressors to AI-Stressors: A Systematic Literature Review. CEUR-WS Vol-4134. https://ceur-ws.org/Vol-4134/

[3] Upwork Research Institute (2024). From Burnout to Balance: AI-Enhanced Work Models for the Future. https://www.upwork.com/research/ai-enhanced-work-models

[4] Ranganathan, A., & Ye, X. M. (2025). AI Doesn’t Reduce Work, It Intensifies It. Harvard Business Review.

[5] Boston Consulting Group (2025). AI at Work 2025: Momentum Builds, but Gaps Remain. https://www.bcg.com/publications/2025/ai-at-work-momentum-builds-but-gaps-remain

[6] Bitkom (2025). Breakthrough in Artificial Intelligence. https://www.bitkom.org/Presse/Presseinformation/Durchbruch-Kuenstliche-Intelligenz

[7] Institut der deutschen Wirtschaft (2025). Artificial Intelligence as a Competitive Factor. https://www.iwkoeln.de/fileadmin/user_upload/Studien/Report/PDF/2025/IW-Report_2025-KI-als-Wettbewerbsfaktor.pdf

[8] Bitkom (2025). Employees Increasingly Using Shadow AI. Representative survey of 604 companies in Germany with 20 or more employees. https://www.bitkom.org/Presse/Presseinformation/Beschaeftigte-nutzen-Schatten-KI

[9] MIT Technology Review Insights in Partnership with Infosys Topaz (2025). Creating Psychological Safety in the AI Era. https://www.technologyreview.com/2025/12/16/1125899/creating-psychological-safety-in-the-ai-era/

[10] Edmondson, A. C. (1999). Psychological Safety and Learning Behavior in Work Teams. Administrative Science Quarterly, 44(2), 350–383. https://doi.org/10.2307/2666999

[11] Edmondson, A. C. (2018). The Fearless Organization: Creating Psychological Safety in the Workplace for Learning, Innovation, and Growth. Wiley.

[12] Microsoft (2025). Breaking Down the Infinite Workday. Work Trend Index Special Report. https://www.microsoft.com/en-us/worklab/work-trend-index/breaking-down-infinite-workday

[13] McKinsey & Company (2025). The State of AI: Agents, Innovation, and Transformation. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai
