In the first part of this series, we examined why AI governance is a leadership responsibility [AI governance starts in the boardroom]. This article addresses the follow-on question: how does one structure AI governance in practice? Three complementary frameworks each fulfil a different function. Before we introduce them, it is worth considering where AI governance is actually situated within an organization.

TL;DR:

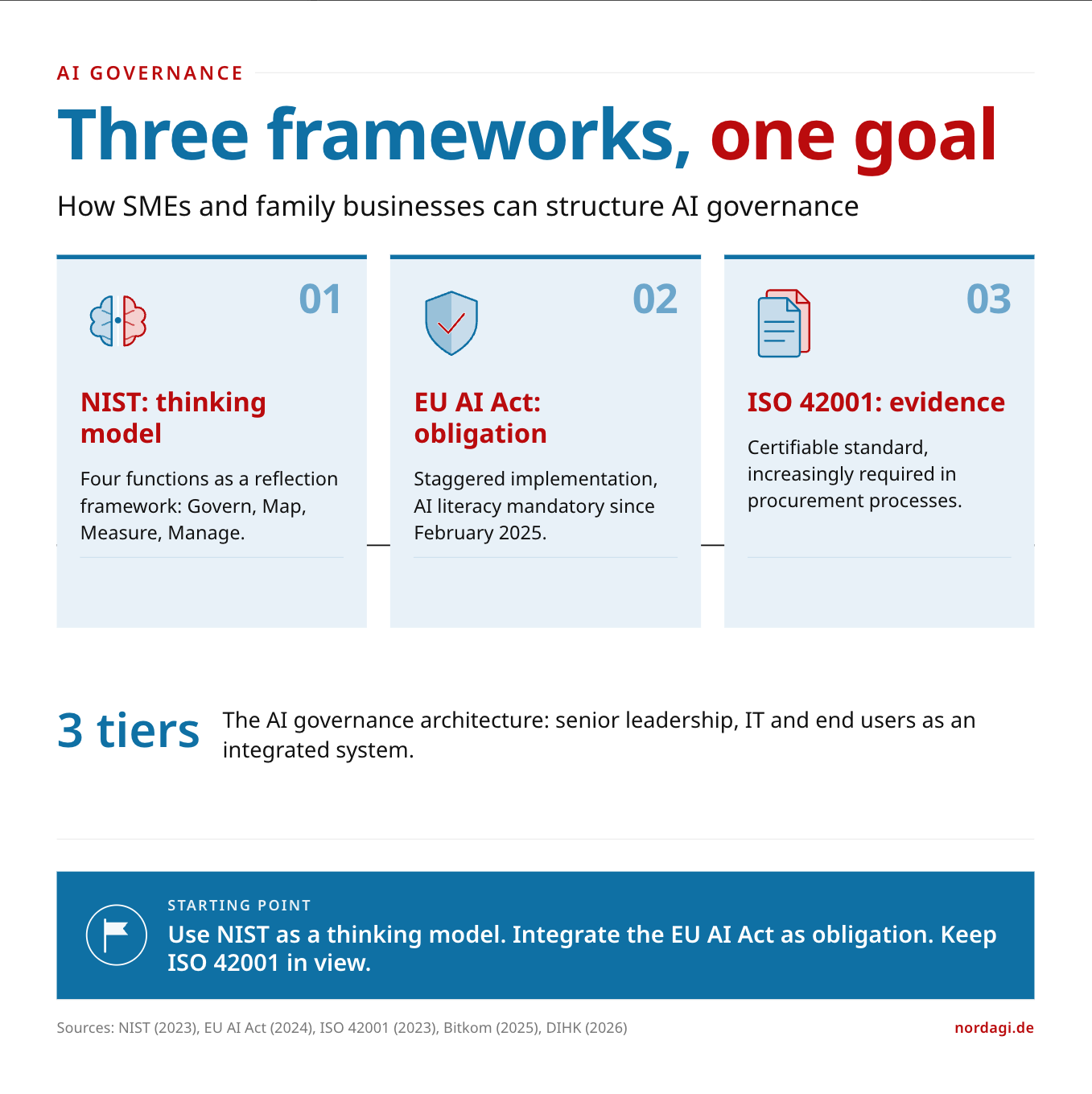

AI governance is not a monolithic project, but an architecture with three tiers: senior leadership, IT, and end users. Three frameworks support implementation: the NIST AI Risk Management Framework as a thinking model, the EU AI Act as a regulatory framework, and ISO/IEC 42001 as a certifiable standard. The critical point is that governance does more than minimize risks systematically. It also creates the framework within which AI opportunities can be pursued in a structured way.

What is the AI governance architecture?

AI governance is not a single topic that can be assigned to one department alone. It is distributed across three tiers within the organization, each with distinct responsibilities that need to work in concert.

At the senior leadership tier, strategy and steering take precedence. The managing director weighs risks, but equally considers which opportunities should be systematically pursued through AI. Senior leadership defines the ethical guardrails, assigns accountabilities, and ensures that AI governance forms part of the broader governance landscape and is embedded within the organization’s strategy. [AI governance starts in the boardroom]

The IT tier encompasses organization-wide coordination, IT security, and support for end users. The IT department not only provides the infrastructure, but also manages access rights, ensures data protection compliance, and supports employees in using AI systems safely. It serves as the bridge between strategic direction and operational use.

At the end-user tier, the actual work with AI takes place. Employees use AI systems to optimize existing processes or build new ones. They document their results, identify sensible automation opportunities, and in doing so, frequently discover use cases that had not yet been considered at strategic level. This is often where the highest value contribution emerges, including when existing processes and their continued relevance are called into question.

Three cross-cutting themes as connectors

Empowerment: All three tiers require the competence and the authority to use AI effectively and safely.

Ethical principles: These apply equally at every tier, from strategic decisions through to individual applications.

Opportunities and risks: Governance does not only mean controlling risks. It also creates the framework within which an organization can systematically identify and capitalize on the opportunities associated with AI, including those that are not yet visible today. This is sometimes referred to as the Imagination Gap: the distance between what AI could deliver and what organizations currently envision. [Imagination Gap]

This AI governance architecture describes how an organization is positioned. The frameworks we introduce below are the tools with which this architecture can be populated.

Why is there no single framework that covers everything?

AI governance touches on multiple dimensions: risk and opportunity management, regulatory compliance, and organizational steering. No single framework covers all three comprehensively. Because the frameworks complement one another, this is not a shortcoming.

A comparison helps:

NIST provides the thinking model (how do we reason in a structured way about the risks and opportunities associated with AI deployment?).

EU AI Act provides the regulatory obligations (what must we do?).

ISO 42001 provides the management system (how do we demonstrate that we are doing it?).

For SMEs and family businesses, this means: organizations do not need to implement all three frameworks simultaneously. But they should understand what each framework delivers and where it fits within their governance architecture.

What does the NIST AI Risk Management Framework comprise?

The NIST AI Risk Management Framework 1.0 was published in January 2023 by the US National Institute of Standards and Technology [1]. It is voluntary, non-prescriptive, and designed to be applicable across sectors. In the United States, it has established itself as the de facto standard for responsible AI deployment.

The framework is built around four core functions that form a continuous cycle.

Govern establishes the foundation. Who makes AI-related decisions within the organization? What values guide those decisions? How does accountability flow? If this foundation is weak, nothing built upon it will function reliably.

Map creates transparency. Who are the stakeholders of the AI systems? Not only the users, but also those affected by the outputs. What is the full lifecycle of an AI application? Where does the organization’s risk tolerance lie? The core principle: what has not been mapped cannot be steered.

Measure evaluates the risks. Not only technically, but also qualitatively. Continuous testing, validation under real-world conditions, scenario analysis. An important consideration: examining metrics alone does not guard against blind spots. The framework therefore deliberately supplements quantitative measurement with qualitative assessment.

Manage prioritizes and acts. Risks are mitigated, accepted, transferred, or avoided. This is where strategy meets execution.

The cycle then begins again. Each iteration deepens the understanding of the organization’s own AI landscape and simultaneously sharpens the view of where new opportunities are emerging.

What makes NIST relevant for SMEs in the DACH region?

The value of NIST lies in its thinking structure. The four core functions provide a checklist that even a smaller or mid-sized organization can use. Not as a formal audit, but as a framework for reflection: have we defined governance? Have we mapped our AI landscape? Are we measuring what we intend to steer? Are we acting on the results?
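
The four reflection questions can be kept as a lightweight self-assessment. The following is a minimal sketch only: the `CHECKLIST` layout, the `open_questions` helper, and the yes/no answers are assumptions for illustration, and NIST does not prescribe this format.

```python
# Illustrative self-assessment keyed by the four AI RMF core functions.
# The questions come from the text; the data layout is an assumption.
CHECKLIST = {
    "Govern": "Have we defined governance?",
    "Map": "Have we mapped our AI landscape?",
    "Measure": "Are we measuring what we intend to steer?",
    "Manage": "Are we acting on the results?",
}


def open_questions(answers: dict[str, bool]) -> list[str]:
    """Return the core functions whose question is unanswered or answered 'no'."""
    return [name for name in CHECKLIST if not answers.get(name, False)]


if __name__ == "__main__":
    # Hypothetical answers from a leadership workshop.
    answers = {"Govern": True, "Map": True, "Measure": False}
    for name in open_questions(answers):
        print(f"{name}: {CHECKLIST[name]}")
```

Treating a missing answer as "no" is a deliberate choice here: a question nobody has addressed is as much an open point as one answered negatively.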

NIST also published a supplementary profile for generative AI in July 2024 (NIST AI 600-1) [1]. This is particularly relevant, as most AI applications for SMEs and family businesses fall precisely into this category: generative text processing, analysis tools, or AI-powered assistants.

What does the EU AI Act require?

The EU AI Act (Regulation (EU) 2024/1689) has been in force since August 2024. Unlike NIST, it is not voluntary guidance but binding law. Implementation follows a staggered timeline [2].

Three dates are relevant for SMEs and family businesses:

Since February 2025, two obligations have applied. First: certain AI practices are prohibited, including social scoring and manipulative AI systems. Second: Article 4 requires AI literacy. Organizations that deploy AI must ensure that their employees possess sufficient AI competence [3]. This obligation is already in effect. Enforcement begins in August 2026.

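From August 2026, the regulation becomes generally applicable. From this point, sanctions may be imposed. The ceiling for the most serious infringements is 35 million euros or 7 per cent of global annual turnover, whichever is higher; lower ceilings apply to other infringements. For SMEs, Article 99 provides that the lower of the two values applies.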

And in August 2027, the requirements for high-risk AI systems apply in full: comprehensive documentation, risk management, and conformity assessment.
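
The three dates and the general sanction ceiling can be expressed as a small lookup. This is a sketch under stated assumptions: the day of the month (the 2nd) is added here because the text names only the month, the function names are hypothetical, and the ceiling is the general "whichever is higher" figure quoted above, an upper bound rather than a prediction of any actual fine.

```python
from datetime import date

# Milestones as described in the text. The day of the month (the 2nd) is an
# assumption added for this sketch; the article names only the month.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices banned; Article 4 AI literacy applies"),
    (date(2026, 8, 2), "Regulation generally applicable; sanctions may be imposed"),
    (date(2027, 8, 2), "High-risk requirements apply in full"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Return the descriptions of all milestones reached on or before `on`."""
    return [text for start, text in MILESTONES if start <= on]


def fine_ceiling_eur(global_turnover_eur: int) -> int:
    """General ceiling from the text: EUR 35 million or 7 per cent of global
    annual turnover, whichever is higher (integer euros, rounded down)."""
    return max(35_000_000, global_turnover_eur * 7 // 100)


if __name__ == "__main__":
    for line in milestones_in_effect(date(2026, 1, 1)):
        print(line)
    print(fine_ceiling_eur(100_000_000))
```

Under this formula the percentage only exceeds the fixed amount once turnover passes 500 million euros, since 7 per cent of 500 million is exactly 35 million.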

What risk tiers does the EU AI Act distinguish?

The EU AI Act distinguishes four risk tiers. Most AI applications in SMEs and family businesses fall into the two lowest categories.

Unacceptable risk covers AI practices that are fundamentally prohibited: social scoring, subliminal manipulation, real-time remote biometric identification in public spaces. For most SMEs, this category is not directly relevant, but it marks the boundary of what the legislator considers intolerable.

High risk is less common in SMEs and family businesses: AI for final credit decisions, recruitment systems without human review, safety-critical applications. Comprehensive documentation and oversight obligations apply here.

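Limited risk applies, for example, to AI chatbots in customer interactions. Users must be informed that they are interacting with an AI system. Pre-screening systems for job applications deserve a closer look: even where the final decision remains with a human, recruitment and candidate selection are listed among the high-risk use cases in Annex III of the Act, so the classification should be checked case by case.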

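Minimal risk covers the majority of common AI tools: generative text processing, AI for accounting, email classification. The Act imposes no specific obligations on this tier. The Article 4 AI literacy requirement still applies to the organization, and voluntary codes of conduct are encouraged.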

The key takeaway: most SMEs and family businesses are unlikely to need a major compliance programme, but they do need a clear understanding of where their AI applications sit within this classification, so that they can establish a documented baseline structure.
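
A documented baseline can be as simple as a mapping from each application to the tier the organization has assigned. The sketch below is illustrative: the tier names follow the text, while the application names, the one-line summaries, and the `needs_attention` helper are hypothetical and are not legal wording.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# One-line summaries paraphrased from the text above, not legal wording.
CONSEQUENCE = {
    RiskTier.UNACCEPTABLE: "Prohibited; do not deploy",
    RiskTier.HIGH: "Comprehensive documentation and oversight obligations",
    RiskTier.LIMITED: "Inform users that they are interacting with an AI system",
    RiskTier.MINIMAL: "Lightest requirements; record the classification",
}

# Hypothetical baseline: each application with the tier the organization assigned.
baseline = {
    "customer chatbot": RiskTier.LIMITED,
    "email classification": RiskTier.MINIMAL,
    "text drafting assistant": RiskTier.MINIMAL,
}


def needs_attention(apps: dict[str, RiskTier]) -> list[str]:
    """Return applications classified as high or unacceptable risk, sorted by name."""
    flagged = {RiskTier.HIGH, RiskTier.UNACCEPTABLE}
    return sorted(name for name, tier in apps.items() if tier in flagged)


if __name__ == "__main__":
    for name, tier in baseline.items():
        print(f"{name}: {tier.value} -> {CONSEQUENCE[tier]}")
    print(needs_attention(baseline))
```

The classification itself remains a human judgment; the code only records the outcome and makes the entries that need deeper review easy to find.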

Why does Article 4 deserve particular attention?

Article 4 is the most frequently underestimated obligation in the EU AI Act. It requires all organizations that use or provide AI to ensure that their employees are sufficiently AI-competent. This sounds soft, but it carries concrete consequences.

According to Bitkom, 53 per cent of German businesses cite a lack of competence as the greatest barrier to AI adoption [4]. This is not merely a productivity issue; it can also become a compliance risk.

Viewed from the other side, there is also an opportunity here: organizations that invest early in AI competence do not only meet the regulatory requirement. They create the conditions for AI to be used productively in daily operations, and for employees to recognize opportunities that were not yet visible at the strategic level.

The good news: the requirements are flexible. There is no prescribed curriculum. The European Commission has explicitly stated that measures should be context-dependent [3]. For an SME or family business, this could mean: documented internal training, regular awareness-raising, and clear usage guidelines.

Why is ISO/IEC 42001 becoming increasingly important?

ISO/IEC 42001 was published in December 2023 and is the first international standard for AI management systems [5]. It is the AI equivalent of ISO 27001 for information security: certifiable, auditable, and increasingly required as evidence of governance maturity.

For SMEs and family businesses, ISO 42001 is relevant for a specific reason: large organizations are beginning to require certification within their procurement processes. Those operating as suppliers or service providers to larger clients may, in the medium term, face the question of whether they can demonstrate ISO 42001 conformity.

This is not solely a compliance matter. It is also an opportunity for differentiation. Those who can demonstrate early that AI governance is being implemented in a structured way gain an advantage with business partners and end customers, who increasingly value responsible AI practices.

This does not mean that every SME must immediately pursue certification. But it does mean that the structures ISO 42001 requires represent sound governance foundations: defined responsibilities, documented processes, regular review, and stakeholder engagement.

How do the three frameworks connect?

NIST provides the conceptual foundation. The four functions (Govern, Map, Measure, Manage) structure thinking about AI risks and AI opportunities in equal measure. This is the starting point.

The EU AI Act translates part of this thinking structure into binding obligations. Those who use NIST as an orientation will find that many EU AI Act requirements are already covered. The DIHK (Association of German Chambers of Industry and Commerce) noted in its position paper on the German implementing legislation (KI-MIG) that the supervisory structures remain opaque and complex [6]. An organization’s own governance foundation helps to navigate this complexity.

ISO 42001 formalizes the whole into an auditable management system. It is the step from “we have governance” to “we can demonstrate governance.”

For an SME or family business, a pragmatic path might look like this: use NIST as a thinking model to build the organization’s own AI governance architecture. Integrate the EU AI Act requirements as a binding minimum standard. Keep ISO 42001 in view as a longer-term orientation, particularly if the client base is moving towards requiring certification evidence.

Where to start?

The most important measure is a stock take: which AI systems are in use? What risk category do they fall into? Who is accountable for their use? And equally significant: where do the greatest potential gains lie, whether through more productive processes or entirely new ones that create additional value? This stock take corresponds to the “Map” function of NIST and simultaneously forms the basis for classification under the EU AI Act.
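
The stock take questions translate directly into the fields of a simple register. The following sketch assumes a hypothetical `AISystem` record and `inventory_gaps` helper; the field names and the example entries are invented for illustration and are not prescribed by NIST or the EU AI Act.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str                 # which AI system is in use?
    risk_category: str        # e.g. "minimal", "limited", "high"; "" if not assessed
    owner: Optional[str]      # who is accountable? None if not yet assigned
    potential_gain: str       # short note on the expected benefit


def inventory_gaps(systems: list[AISystem]) -> list[str]:
    """List entries that still lack an accountable owner or a risk category."""
    gaps = []
    for system in systems:
        if not system.owner:
            gaps.append(f"{system.name}: no accountable owner")
        if not system.risk_category:
            gaps.append(f"{system.name}: risk category not assessed")
    return gaps


# Hypothetical entries for illustration only.
inventory = [
    AISystem("Invoice matching", "minimal", "Head of Finance", "faster month-end close"),
    AISystem("Website chatbot", "limited", None, "shorter response times"),
]

if __name__ == "__main__":
    for gap in inventory_gaps(inventory):
        print(gap)
```

A spreadsheet with the same four columns serves the same purpose; the point is that every system has an answer to each stock take question, and that missing answers are visible.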

The second step concerns AI literacy under Article 4. Document which training and awareness measures are in place. Not as a bureaucratic obligation, but as the foundation for competent AI use within the organization.
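
Documenting training measures can start with a dated log per employee. This is a minimal sketch: the log structure, the employee identifiers, and the 365-day refresh interval are assumptions chosen for illustration, as the regulation prescribes neither a curriculum nor an interval.

```python
from datetime import date

# Hypothetical training log: employee -> dates of completed AI literacy measures.
training_log = {
    "a.meier": [date(2025, 3, 10), date(2026, 1, 15)],
    "b.schulz": [date(2024, 11, 5)],
    "c.weber": [],
}


def overdue(log: dict[str, list[date]], today: date, max_age_days: int = 365) -> list[str]:
    """Return employees with no recorded measure within the last `max_age_days`.

    The 365-day default is an assumption, not a regulatory requirement.
    """
    result = []
    for employee, dates in log.items():
        if not dates or (today - max(dates)).days > max_age_days:
            result.append(employee)
    return sorted(result)


if __name__ == "__main__":
    print(overdue(training_log, today=date(2026, 3, 1)))
```

The same record doubles as evidence: it shows which measures took place and when, which is the kind of documentation this step asks for.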

The third step is a governance setup: a named responsible person, documented usage guidelines, and regular review. This need not be a vast compliance programme. It must be a functioning and robust framework that addresses risks whilst also creating space for new possibilities.

In the third part of this series, we examine where the hidden governance gaps lie: in AI that employees are already using without the organization’s knowledge, and in AI embedded within the SaaS landscape that has never been inventoried.

Sources:

[1] NIST AI Risk Management Framework 1.0 (AI RMF), published 26 January 2023. NIST AI 600-1 (Generative AI Profile), published July 2024. URL: https://www.nist.gov/itl/ai-risk-management-framework

[2] EU AI Act (Regulation (EU) 2024/1689), in force since 1 August 2024. URL: https://artificialintelligenceact.eu/implementation-timeline

[3] European Commission, AI Literacy Q&A. URL: https://digital-strategy.ec.europa.eu/en/faqs/ai-literacy-questions-answers

[4] Bitkom, Artificial Intelligence 2025, published February 2026. Survey of 604 organizations with 20 or more employees. URL: https://www.bitkom.org/Bitkom/Publikationen/Kuenstliche-Intelligenz-in-Deutschland

[5] ISO/IEC 42001:2023, Artificial Intelligence Management System. URL: https://www.iso.org/standard/81230.html

[6] DIHK position paper on the KI-MIG, March 2026. The DIHK criticizes that the market surveillance structures are in parts opaque and difficult to follow.

This article is part 2 of the series “AI Governance for SMEs and Family Businesses.” Part 1 covers leadership accountability; part 3 examines the hidden risks of shadow AI, vendor AI, and agentic AI.
