According to Bitkom, the proportion of German businesses actively using AI has more than doubled within a single year, reaching 41 per cent [1]. At the same time, a 2026 survey by the Association of German Chambers of Industry and Commerce (DIHK) found that 59 per cent of businesses cite legal uncertainty as a central challenge in AI adoption [2]. These figures point not only to accelerating AI uptake, but also to a potential governance gap. Could it be that the structures intended to govern AI use are growing more slowly than the use itself? Who ultimately bears the responsibility for AI deployment? The IT department or senior leadership? The straightforward answer: both, but in different roles.

TL;DR:

AI governance is already a leadership responsibility. IT carries the operational responsibility for infrastructure and security. But the strategic decisions, including risk tolerance, accountability structures, and the capacity for sound judgement, sit with senior leadership. This article examines where blind spots emerge, why governance must extend beyond an organisation's own tools, and which competencies business leaders need to develop.

Why is AI governance not a task confined to the IT department?

It seems straightforward: AI is ultimately “just software”, and therefore the IT department is responsible. This assumption is misleading because it conflates operational delivery and its associated accountability (IT) with strategic responsibility (C-suite / senior leadership).

The IT department can provide technical infrastructure, administer tools, and implement security standards. But AI governance also requires decisions that extend well beyond technology: what level of risk is the organisation prepared to accept? Which processes may be automated, and which may not? Who oversees, and who is accountable for, the outputs generated by an AI system? These questions go beyond the scope of operational delivery. They are, in the end, leadership decisions.

Context for this comes from Kate Kellogg, professor at MIT Sloan, whose research into agentic AI projects produced a telling finding: in a clinical AI deployment, 80 per cent of the effort was not directed at the technology implementation itself, but at stakeholder alignment, governance, and workflow integration [3]. To return to the question of responsibility: defining usage policies, building accountability structures, and securing organisational buy-in are not IT tasks. They are leadership tasks.

Where are the blind spots for senior leadership?

If AI governance is a leadership responsibility, how is it actually implemented within the organisation? This is where a structural blind spot in task ownership can emerge.

To make sound strategic AI decisions, business leaders need to be equipped to do so. Not through technical expertise, but through decision-making competence: those responsible for AI governance must be able to assess what AI systems can deliver, where their limitations lie, and what risks their use entails. Ideally, senior leaders should bring first-hand experience of working with AI systems, to gauge the implications of their own decisions.

In practice, AI-related decisions within SMEs and family businesses are often concentrated with a single individual, frequently the managing director. This is understandable. But it raises a question: does that person have the complete picture? Do they know which AI systems are in use across the organisation? Are they aware of the limitations of those systems? Can they see where employees are using AI tools without any documentation?

A duality emerges here that is characteristic of smaller and mid-sized organisations. The prevailing way of working is perceived as pragmatic and effective. Decisions are taken quickly, and the business runs. Viewed from the outside, however, by customers, business partners, or during a due diligence review, the same approach may be perceived as a lack of governance. These are two sides of the same coin: things work operationally, but the documented structure that makes this functioning verifiable and transferable, and that creates a binding framework, is absent.

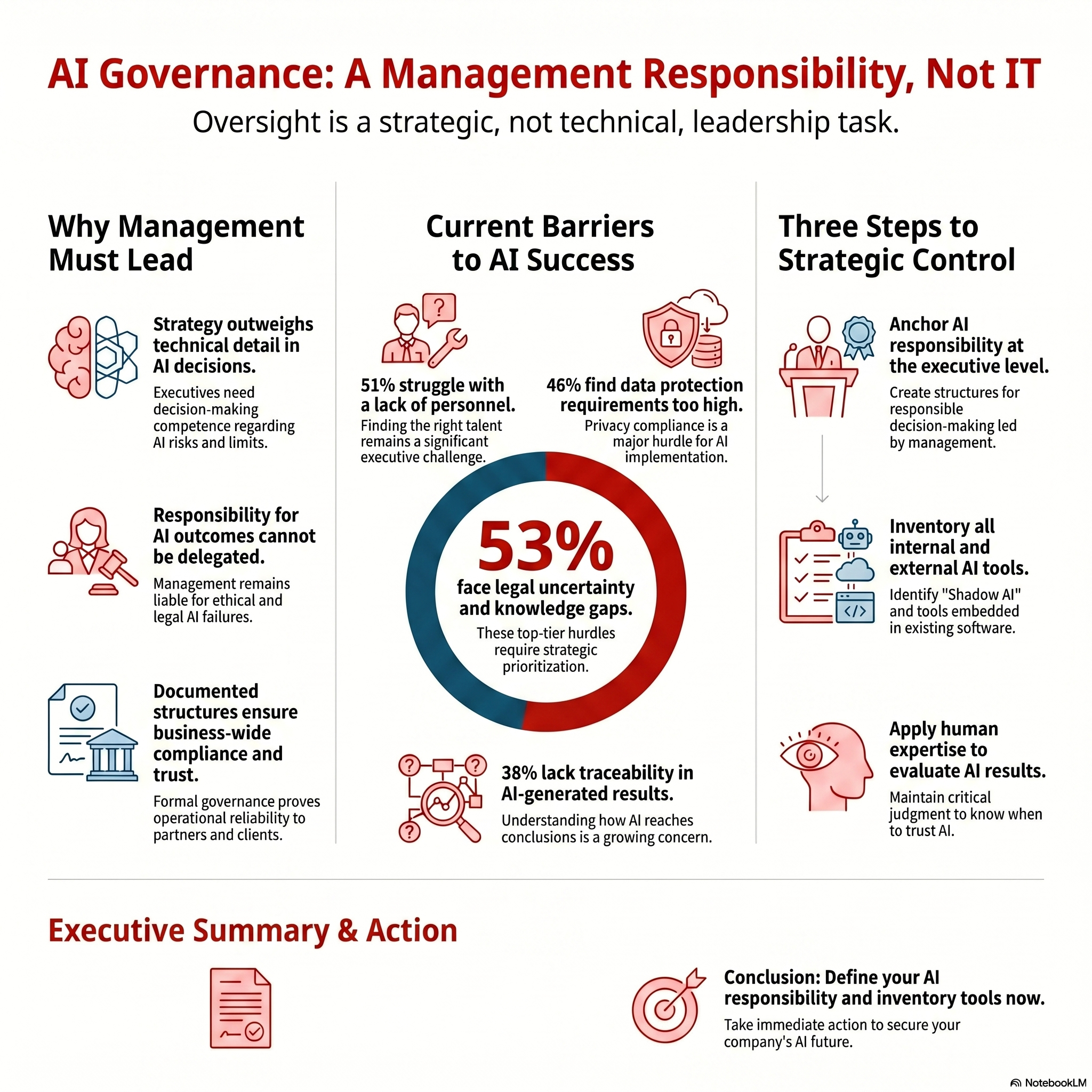

There is more to consider. The barriers to AI adoption are varied and extend well beyond technology. A 2025 Bitkom study paints a differentiated picture. 53 per cent of the organisations surveyed cite uncertainty around legal requirements as the greatest barrier. An equal 53 per cent name a lack of technical expertise. 51 per cent point to insufficient staff resources, 48 per cent to the high demands of data protection, and 38 per cent to the difficulty of tracing and verifying AI-generated outputs [4]. These are no longer purely technical problems. They are challenges that require strategic decisions, prioritisation, and resource allocation. And that places them squarely within the remit of senior leadership.

How far does AI governance need to reach?

AI governance does not only concern the obvious AI tools that an organisation procures and deploys. In practice, it often extends much further: into the systems of service providers, SaaS vendors, and suppliers. The question is: who shares accountability for the consequences when an HR platform, a CRM system, or accounting software used within the organisation deploys AI functions?

When AI-driven decisions are made on behalf of the organisation, they remain the organisation’s decisions, regardless of whether the underlying technology is operated internally or externally. [AI decisions in business: Why humans must remain the final authority]

When it becomes public that an organisation has relied on AI-driven decisions that turn out to be flawed, discriminatory, or otherwise ethically questionable, the reputational damage is immediate. Customers, applicants, and business partners are already sensitised to these issues.

The Workday case illustrates how quickly such a risk can materialise. The US-based company offers AI-powered applicant screening software used by numerous employers. An applicant who had submitted over 100 applications, each time rejected, sometimes within minutes, filed a lawsuit alleging systematic age discrimination. In May 2025, a US court granted preliminary class certification [5]. The companies using Workday had assumed the vendor would ensure compliance. The court is now examining whether Workday acted as an “agent” of the employers, which could mean that both the vendor and the client organisations share liability.

Although this case is situated within the US legal system, the fundamental question remains the same: who monitors the AI outputs? Who ensures that decisions are consistent with the organisation’s own ethical standards? That responsibility cannot be delegated.

How does AI change leadership responsibilities, and what does this mean for SMEs?

Beyond the challenges of compliance and risk management, AI creates additional leadership demands. Generative AI can produce knowledge at a quality and speed that would have been unimaginable not long ago. Texts, analyses, and strategic options emerge in seconds. What is easily overlooked: this knowledge is increasingly detached from experience and context.

AI primarily replaces explicit, formal knowledge: the kind that can be learnt and passed on. What it does not replace is tacit knowledge, the experiential understanding that comes into play when there is no clear-cut answer, precisely because not all boundary conditions have been established. Managing this tacit knowledge is becoming a central leadership competence.

When AI makes answers available at any time, the person with the most knowledge is no longer the most important in the room. Rather, it is the person best able to judge how that knowledge should be interpreted and applied. The emphasis shifts from factual knowledge towards judgement, evaluation, and the decision-making competence that arises from them. [AI as a sparring partner: How business leaders gain a genuine counterpart]

This shift has direct governance implications. If AI outputs cannot be treated as automatically trustworthy, the organisation needs structures that define the points at which human review of results is mandatory and how AI recommendations are confirmed or rejected, with clear documentation of each decision. Although this may sound abstract at first, these are the foundations of functioning AI governance.
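To make this less abstract, a mandatory review point can be pictured as a simple rule plus a decision log. The following is a minimal Python sketch under stated assumptions, not a prescribed implementation: the use-case names in `REVIEW_REQUIRED`, the function `apply_recommendation`, and the log fields are all hypothetical and would need to reflect the organisation's own policies.

```python
from datetime import datetime, timezone

# Hypothetical policy: use cases where a human must confirm or reject the AI output.
REVIEW_REQUIRED = {"hiring", "credit_decision", "contract_terms"}


def apply_recommendation(log, use_case, recommendation,
                         reviewer=None, accepted=None, reason=""):
    """Return True if the recommendation may be acted on, and log the decision."""
    needs_review = use_case in REVIEW_REQUIRED
    if needs_review and (reviewer is None or accepted is None):
        # No named reviewer or no explicit verdict: the rule blocks the decision.
        raise ValueError(f"human review is mandatory for use case '{use_case}'")
    # Where no review is required, the recommendation is accepted as-is.
    final = bool(accepted) if needs_review else True
    log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "use_case": use_case,
        "recommendation": recommendation,
        "reviewer": reviewer,
        "accepted": final,
        "reason": reason,
    })
    return final


# Illustrative usage with invented entries.
decision_log = []
apply_recommendation(decision_log, "marketing_copy", "use draft B")
apply_recommendation(decision_log, "hiring", "reject applicant",
                     reviewer="HR lead", accepted=False,
                     reason="screening criteria not job-related")
print(len(decision_log))
```

The point of the sketch is the design choice rather than the code: the list of review-required use cases is a leadership decision, while the log provides the documentation that makes each confirmation or rejection traceable.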

To ensure the implementation of these governance requirements, senior leadership must preserve its capacity to act. Three areas are in focus.

First: structure accountability rather than delegate it. AI governance must be anchored at senior leadership level. This does not mean that the managing director controls every detail. It means creating the structures that lead to responsible decision-making. In SMEs and family businesses with 50 to 250 employees, the person who carries this responsibility is often the managing director personally.

Second: establish visibility. Many organisations do not know which AI systems are already in use. Employees use AI tools through personal accounts, and SaaS products contain embedded AI functions that have never been inventoried. The first governance step is therefore a stocktake: which AI systems are in use? For what purposes? Who is accountable for the outputs? [AI resilience]

Third: strengthen judgement. The established principle that judgement and the evaluation of outcomes are leadership competences now extends to the critical assessment of AI-generated results. This does not require technical expertise, but the ability to ask the right questions. When can an AI recommendation be relied upon? When can it not? At which points is additional human expertise needed to reach a conclusive assessment?
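The stocktake named in the second area can start as a very simple register. The following Python sketch is one possible shape for it, offered as an illustration only: the class `AISystemEntry`, its field names, the helper `governance_gaps`, and the example entries are assumptions made for this sketch and are not drawn from any standard or from a real organisation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemEntry:
    """One row of a hypothetical AI inventory (all field names are assumptions)."""
    name: str               # tool or feature, e.g. an embedded SaaS function
    purpose: str            # what it is used for
    owner: Optional[str]    # person accountable for the outputs, None if unassigned
    vendor_embedded: bool   # True if the AI function sits inside a third-party product
    documented: bool        # True if the use is recorded in internal documentation


def governance_gaps(inventory: list[AISystemEntry]) -> list[str]:
    """Return the names of entries with no accountable owner or no documentation."""
    return [e.name for e in inventory if e.owner is None or not e.documented]


# Illustrative entries only.
inventory = [
    AISystemEntry("CRM lead scoring", "prioritise sales leads", "Head of Sales", True, True),
    AISystemEntry("Chat assistant (personal accounts)", "drafting emails", None, False, False),
    AISystemEntry("Applicant screening", "pre-sort applications", "HR lead", True, False),
]

print(governance_gaps(inventory))
```

Even a register this small answers the three stocktake questions (which systems, for what purposes, who is accountable) and makes visible where an owner or documentation is still missing.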

What is the regulatory picture?

In addition to national requirements, the EU AI Act warrants close attention. Since February 2025, Article 4 has been in effect: organisations that deploy AI must take measures to ensure that their employees have a sufficient level of AI literacy [6]. From August 2026, enforcement mechanisms will apply, accompanied by a substantial sanctions framework.

For most AI applications within SMEs and family businesses, the requirements are moderate: transparency, documentation, human oversight. But even moderate requirements need someone who is accountable for them and who ensures they are implemented.

In the second part of this series, we examine the specific frameworks that support a structured approach: the NIST AI Risk Management Framework, the EU AI Act, and ISO/IEC 42001. Not as a theoretical exercise, but as practical orientation for SMEs and family businesses.

Sources:

[1] Bitkom, Artificial Intelligence in Germany, 2026 report, based on 604 organisations with 20 or more employees. URL: https://www.bitkom.org/Bitkom/Publikationen/Kuenstliche-Intelligenz-in-Deutschland

[2] DIHK Digitalisation Survey 2026, approximately 5,000 organisations across all sectors. URL: https://www.dihk.de/de/themen-und-positionen/wirtschaft-digital/digitalisierung/dihk-digitalisierungsumfrage-2026

[3] Kellogg, K. et al. (2025), cited in: MIT Sloan, “Agentic AI, explained,” February 2026. URL: https://mitsloan.mit.edu/ideas-made-to-matter/agentic-ai-explained

[4] Bitkom, Artificial Intelligence 2025, published February 2026. Survey of 604 organisations with 20 or more employees (fieldwork calendar weeks 27-32, 2025). URL: https://www.bitkom.org/Bitkom/Publikationen/Kuenstliche-Intelligenz-in-Deutschland

[5] Mobley v. Workday, Inc., N.D. Cal. Case No. 23-cv-00770-RFL. Preliminary class certification granted 16 May 2025. URL: https://www.courtlistener.com/docket/66831340/mobley-v-workday-inc/

[6] EU AI Act, Regulation (EU) 2024/1689, Article 4: AI Literacy. URL: https://artificialintelligenceact.eu/article/4/

This article is part 1 of the series “AI Governance for SMEs and Family Businesses.” Part 2 covers the relevant frameworks (NIST, EU AI Act, ISO 42001); part 3 examines the hidden risks of shadow AI, vendor AI, and agentic AI.
