Every day brings fresh AI announcements. Claude can now do this, ChatGPT can now do that. Organisations launch pilot projects, test agents, automate tasks or entire processes. And yet, for most, the central question remains unanswered: why does none of this scale? This article explores the idea that the answer lies not in the technology, but in the organisation that deploys it.

TL;DR:

41 per cent of German companies now use AI, more than double the figure from a year ago. At the same time, two thirds of all organisations remain stuck in experimentation mode, never progressing beyond pilot projects. Technology is not the obstacle. What is missing is the willingness to treat AI as an organisational challenge rather than a technical project. The organisations that derive the greatest value from AI do something fundamentally different: they do not acquire more tools. They transform their organisation first.

Why do so many AI pilot projects reach a dead end?

The pattern is familiar to anyone working with AI in a business context. Everyone is talking about AI. The IT department receives the brief to “do something with AI.” The first pilot project materialises, perhaps a customer service chatbot or an AI-assisted proposal generator. Early results look promising. Adjustments are made. And then, quite suddenly, progress stalls.

The pilot remains a pilot. Perhaps because the chosen use case was never particularly scalable to begin with. Six months later, the uncomfortable questions surface: what actually became of the AI project, and where is the return on investment?

This is not an isolated phenomenon. Bitkom’s 2026 study captures the dynamics in numbers: 41 per cent of German companies now deploy AI, more than double the previous year’s figure. Paradoxically, a further finding from the same study reveals that 62 per cent of these AI-using companies classify themselves as laggards [1]. How does one reconcile the apparent contradiction? By recognising that the approach itself may be the wrong way round.

The global picture confirms the pattern. McKinsey’s State of AI Report 2025, drawing on responses from nearly 2,000 organisations across more than 100 countries, shows that 88 per cent of all companies use AI in at least one function. Yet only around one third have managed to scale AI beyond individual experiments or pilots [2]. Two thirds remain trapped in what the literature terms “pilot purgatory.”

Gartner forecasts that by the end of 2026, approximately 60 per cent of AI projects will be abandoned where the necessary prerequisites are absent, whether that is a suitable data foundation, a clearly defined and value-creating use case, or both [3]. AI agent systems, capable of executing multi-step tasks autonomously, are equally affected: Gartner expects more than 40 per cent of these projects to be discontinued by the end of 2027 [4].

The question that, in our view, demands attention first: why do so many pilot projects fail when the technology demonstrably works?

Why do tools alone change nothing?

In our advisory work, we observe a recurring pattern. Most organisations have already gained initial AI experience. They have tested tools, experimented with prompts, and perhaps even automated a process or two. What they lack, and what prevents AI from scaling across the organisation, is a plan for how AI moves from isolated experiments to an organisation-wide resource and capability.

To make this concrete: I have experienced this firsthand. When I began integrating AI systematically into my own workflows, my personal productivity rose noticeably. Stronger first drafts, faster research with more differentiated results, clearer structures.

My organisation did not benefit initially. The knowledge of

- how to deploy AI in a way that creates genuine value,

- which prompts work effectively,

- what contextual information AI requires to complete tasks reliably, and

- how AI outputs must ultimately be reviewed by people

resided entirely in my head and had to be translated and made available for the wider team.

This mirrors a pattern we encounter regularly in the organisations we advise: one person, often driven by personal motivation, builds AI competence. The scaling challenge, however, is only overcome when two aspects are brought into balance:

- “AI lone wolves” are not the solution in themselves. They merely shift the bottleneck. When one person on a team suddenly produces vastly more output with AI, all upstream and downstream functions must be able to respond accordingly. The relevant teams, and ideally the entire organisation, need to develop in step.

- A first use case provides a useful starting point, but it is insufficient to embed AI sustainably or extend it beyond a single, isolated application. The real lever lies in closing the Imagination Gap: systematically examining all processes across the organisation to identify the value-creating use cases where AI can achieve genuine leverage. This exercise simultaneously presents an opportunity to eliminate processes that contribute no real value.

The Section AI Proficiency Report from January 2026 underscores the relevance: 85 per cent of all knowledge workers see no value-creating AI use case in their daily work [5]. Not because the tools are absent, but because the imagination is lacking to see how AI could make a difference in their specific processes.

Why is workflow redesign the decisive lever?

McKinsey’s State of AI Report 2025 contains a central finding. Of 25 attributes examined, one has by far the greatest influence on whether an organisation achieves genuine economic value from AI: the willingness to fundamentally redesign workflows [2].

Not the choice of AI model. Not the size of the budget. Not the scale of the data estate or its quality. Rather, the question of whether an organisation was prepared to rethink and restructure its processes before deploying AI.

The study provides unambiguous evidence. The organisations McKinsey classifies as “high performers” differ from all others in one critical respect: they are three times more likely to have fundamentally redesigned their workflows [2].

This is where the shift in perspective becomes apparent. In our article on data quality and AI, we introduced a distinction we consider fundamental: faster versus further.

Accelerating (faster): “We have a process. Now we add AI.” This sounds reasonable and low-risk. In practice, it means existing workflows remain unchanged whilst AI is layered on as an accelerator. The customer service chatbot responds more quickly, but the underlying processes remain the same. The proposal team uses AI-generated text, but the approval process with its six signatures does not change. AI is reduced to accelerating the status quo.

Advancing (further): “We have an objective. How would we design this process if AI were part of it from the outset?” This demands more effort initially. It means questioning existing workflows, redefining responsibilities, and changing how people work. But it is the approach that genuinely moves the organisation forward, because it does not accelerate the status quo; it challenges the status quo itself.

Faster means accelerating existing processes with AI. Further means rethinking the processes themselves.

What characterises an AI-capable organisation?

Gartner describes a model comprising four interdependent layers: orchestration, architecture, enablement, and responsibility [6]. What stands out is that none of these four areas can be addressed by installing a new AI tool, because all four are fundamentally organisational tasks.

Orchestration: Which use cases do we prioritise? How do we decide whether to buy or build? What process do we use for piloting, and more importantly, for scaling?

Architecture: What is the state of our data? Is it usable for AI? How do we build a technical foundation that works beyond individual tools?

Enablement: Can our employees work effectively with AI? Do they understand where AI can support them and where it cannot? Does a mode of collaboration between people and AI exist that goes beyond “I type a prompt”?

Responsibility: Who decides where AI is deployed? How do we handle errors? How do we ensure that AI-assisted decisions remain transparent and accountable?

Translated into the reality of SMEs and family businesses: an organisation with 120 employees will not establish a dedicated team to operate and monitor AI models. Nor does it need to. But it must ask itself: are our processes documented in a way that enables AI to work with them? Do our senior leaders know how to evaluate AI outputs? Are there clear responsibilities governing which decisions AI may prepare and which it may not?

These are not technical questions. They are organisational ones. And this is precisely why so many AI projects fail: they are initiated as IT projects when they are, in fact, organisational transformation projects.

Where do SMEs and family businesses stand?

The data paints a divided picture. A study by the Karlsruhe University of Applied Sciences found that only 21 per cent of SMEs have a formal AI strategy. Among those already actively using AI, 64 per cent operate without any strategy whatsoever [7]. The two figures refer to different populations (all SMEs versus active AI users), but that only makes the finding more striking: even among active AI adopters, a strategic approach remains the exception.

Bitkom’s 2026 study adds further context: 77 per cent of AI-using companies report an improved competitive position, and 52 per cent see a measurable contribution to business success [1]. This illustrates the divide: organisations that approach AI strategically achieve results and create value, whereas organisations that merely experiment mostly generate effort. The IW Cologne finding that 62.7 per cent of surveyed companies cite the difficulty of assessing AI’s value as their greatest obstacle [8] confirms the point: where strategic embedding is absent, the visibility of value is absent too.

What does the path from experiment to AI organisation look like?

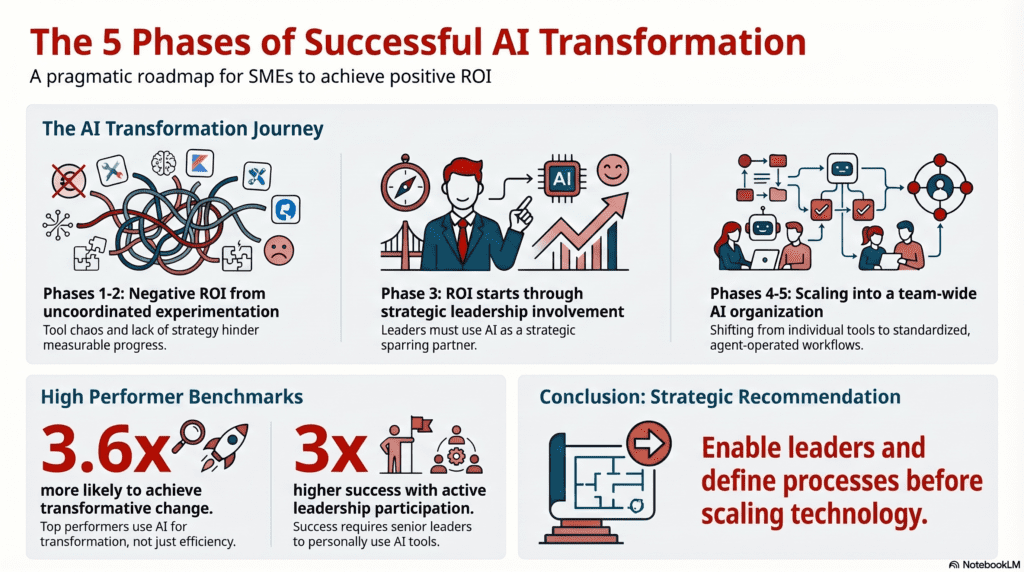

On our website, we describe this path as the AI Transformation Journey: five phases that organisations typically traverse, from unplanned experimentation to strategic AI maturity. The central insight is that AI readiness is not binary. An organisation is never simply “AI-ready” or “not ready.” It always occupies a position on a continuum of development, and each phase carries its own challenges, its own ROI profile, and its own change management requirements.

In Phase 1, the “Wild West,” various AI tools are tested without strategic direction. There is no clear success measurement and no coordination. The ROI in this phase is typically negative. Many organisations remain here, confusing activity with progress.

Phase 2, “Copilot purchased, problem not solved,” describes the moment when software licences are acquired but without change management or systematic introduction. Tools are used sporadically. The investment has been made; the returns remain modest.

The turning point arrives in Phase 3, when senior leaders begin to use AI not as a novelty but as a strategic sparring partner. The first systematic workflows emerge. AI is integrated into concrete decision-making processes. This is where the ROI begins to justify the investment.

In Phase 4, AI becomes a team capability. Shared workflows, standardised prompts, cross-functional AI strategies: this is the multiplayer mode, in which the organisation as a whole becomes AI-capable.

Phase 5, “human-led, agent-operated,” represents the target state of an AI-native organisation in which people focus on strategy and leadership whilst AI agents handle operational tasks.

What this phase logic makes clear: most organisations that launch AI projects and fail attempt to leap directly from Phase 1 to Phase 4 or 5. They purchase agent licences or build automations without having traversed Phases 2 and 3, without having enabled their senior leaders, documented their processes, or made their knowledge explicit. And this is precisely why scaling fails.

Here, the McKinsey findings and the reality of SMEs and family businesses converge. The high performers are not those with the most sophisticated AI tools. They are the organisations that have developed systematically, phase by phase. That have engaged their senior leaders. That have rethought their processes. That have treated AI not as an experiment but as a strategic capability. McKinsey’s analysis shows: high performers are 3.6 times more likely to deploy AI for transformative change, not merely for efficiency gains. And they are three times more likely to have senior leaders who actively champion and personally use AI [2].

What does this mean in practice?

For organisations at the beginning of the journey, it means this: the first step is not tool selection. The first step is an honest assessment. Where do our processes stand? What knowledge exists only in the minds of individual employees? Where does the greatest potential lie, not for AI deployment, but for value creation?

For organisations that have already gained AI experience and notice that results are not scaling as expected: the issue most likely does not lie with the technology. It lies with missing process documentation, with a leadership team that delegates AI rather than demonstrating its use, with a workforce that has no conception of how AI might change their day-to-day work.

In both cases, the next step does not begin with a software decision. It begins with an organisational one: do we want to treat AI as a tool, or build it as a capability?

Conclusion: the organisation is the product

The AI debate among SMEs and family businesses frequently revolves around the wrong question. The starting point should not be “Which AI tool do we need?” but rather: “What kind of organisation do we need to become in order to use AI meaningfully?”

The answer does not require million-pound budgets or a dedicated AI department. It requires the willingness to document processes, make knowledge explicit, develop senior leaders, and rethink workflows. Not everything at once. Not perfectly. But systematically.

The organisations that will derive the greatest value from AI in the coming years are not those with the most AI tools. They are those that have stopped running AI experiments and started building AI organisations.

References

[3] Gartner: “Lack of AI-Ready Data Puts AI Projects at Risk”, press release, February 2025.

[6] Gartner: “10 Best Practices for Scaling GenAI” (2025) and “The AI Future of Leadership and Management” (2026). Graphics published via Gartner IT on LinkedIn. Full studies available via Gartner subscription.

[7] Hochschule Karlsruhe (Karlsruhe University of Applied Sciences): study on AI use in German SMEs, 2025. Cited in: EvarLink, “KI im Mittelstand: Praktische Einsatzfelder jenseits des Hypes”.
