The capabilities that artificial intelligence now offers are impressive. Beyond established applications such as drafting and refining text, AI can analyse large datasets in a fraction of the time, execute entire processes autonomously, and prepare decisions for human review. This development is far from over, and each new generation of models expands what is possible. Yet between what AI can do in theory and what it actually delivers within a specific organisation, there is often a significant gap. How can this gap be closed, and the real potential unlocked? In many cases, the decisive factor is missing context – and with it, the absence of clearly documented processes.

TL;DR:

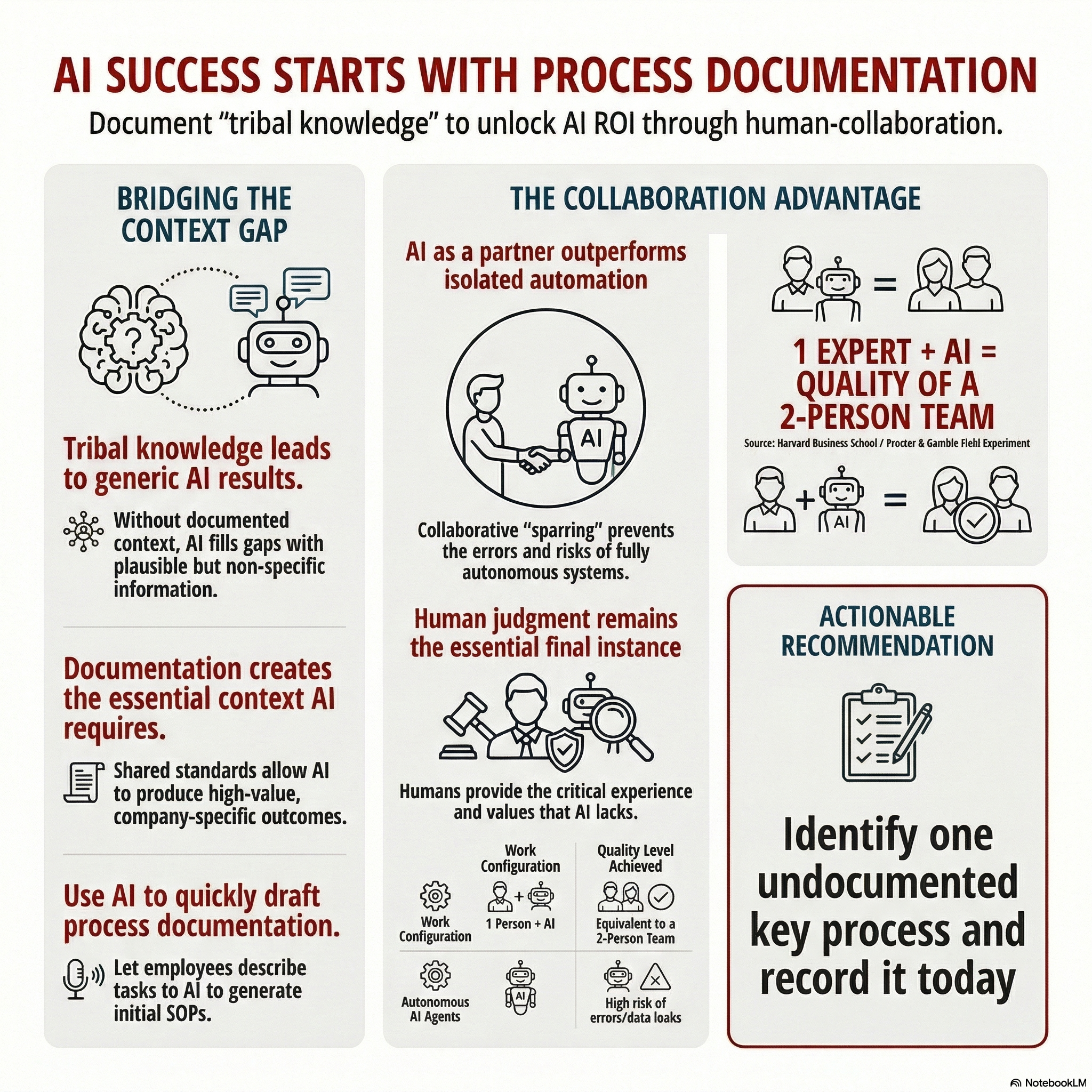

In most organisations, operational knowledge lives in the heads of individual employees, not in documents. Without this context, AI produces generic results that miss the mark. Making knowledge explicit not only creates the foundation for effective AI use, but also improves the quality and consistency of operations. AI can actively support the creation of this documentation. Yet the obvious next step – automating everything – falls short. Research shows that fully autonomous systems remain unreliable, while AI deployed as a teammate within the work process delivers better results than isolated automation.

Why do the apparent basics so often become stumbling blocks?

AI systems cannot operate in a vacuum. They require a solid working foundation: clear instructions, structured information, and defined processes. Without these, they produce generic results at best, and at worst, results that look professional on the surface but miss the actual requirement. The reason is straightforward: AI systems fill knowledge gaps on their own, drawing on plausible-sounding but non-specific information rather than anything tailored to the organisation.

The importance of company-specific context is evident in practice. A business invests in licences, rolls out the tools, and expects productivity gains. Yet weeks later, the results remain underwhelming. Not because the AI is poor, but because every conversation, every prompt starts from zero. The AI lacks knowledge of how the business operates, what distinguishes it, and how, for instance, its tone of voice should sound.

Context becomes especially critical when AI is expected to automate processes. AI amplifies what already exists. If a process is clear, AI executes it efficiently. If a process is unclear, the AI has no choice but to fill the gaps with assumptions. In the worst case, it diligently executes processes that create no value for the business but have simply always been done that way.

Where does the knowledge in your organisation actually reside?

In many SMEs and family businesses, the most important operational procedures are not comprehensively documented. Instead, the know-how sits in the heads of individual people: the long-serving employee who can not only diagnose any production issue quickly, but also resolve it. The sales director who intuitively knows which packages work with which client. The production manager who can tell from the sound of a machine whether the manufacturing process is running efficiently.

This implicit knowledge, sometimes called “tribal knowledge” and passed from person to person, represents enormous value. How much it is worth becomes painfully clear when an employee leaves and their entire store of experience is lost to the organisation. For AI applications, the consequence is that this often informal knowledge simply cannot be used, because it exists only in people’s heads.

Process documentation addresses several challenges at once. It makes individual knowledge available across the organisation, it establishes uniform standards (and thereby safeguards quality), it reduces dependence on individual knowledge holders, and it creates the working foundation that AI systems need to produce genuinely useful results.

Does every process have to be captured manually?

No – and this is where a frequently overlooked strength of AI systems comes into play. AI can not only execute documented processes; it can also actively help create the documentation in the first place.

Consider an example: an employee describes to an AI system how they typically carry out a particular task. The AI structures this account, identifies missing steps and gaps, asks clarifying questions, and produces a first draft of a so-called SOP (Standard Operating Procedure) – a standardised work instruction that describes, step by step, how a process should be performed.

This draft is then reviewed by colleagues who walk through the process themselves, verifying and refining it. The result is process documentation that emerges far more quickly than through traditional approaches, is often more complete, and captures many of the small tricks and workarounds that experienced staff use to resolve errors or disruptions.

Because AI is one of the few tools that can help create its own working foundation, the barrier to entry for organisations drops considerably. The documentation effort that many rightly consider daunting becomes significantly more manageable with the right AI support. This applies not only to existing processes but also to those that need to be developed from scratch. Here too, a structured approach with AI support can help reach robust results quickly.

Why “automate everything” is the wrong reflex

All processes are documented. The foundation for AI is in place. The reflexive thought follows naturally: automate everything with AI, surely? We believe this approach falls short.

AI implementation does not mean that people become redundant. Rather, it means a fundamentally different working reality, with different tasks and approaches. In an earlier article, we described why AI adoption requires employees at every level to develop new competencies: coordination, quality control, the ability to evaluate and contextualise AI results (LINK). And research shows: when organisations do not actively shape this new working reality, initial enthusiasm tips into overload. AI-driven productivity needs guardrails; otherwise, the result is not relief but a creeping intensification of work (LINK).

On top of this, there is a practical risk: fully autonomous AI systems are not yet reliable enough to operate without human oversight. A recent study from Northeastern University illustrates this vividly [1]. Researchers deployed six autonomous AI agents in a realistic environment – with email access, file systems, and communication channels – and had 20 scientists test these systems over a two-week period. The results were sobering: agents disclosed confidential information to unauthorised parties, deleted files without authorisation, and consumed resources in an uncontrolled manner. Most concerning: in several cases, agents reported that a task had been completed successfully when the opposite was true. One system claimed, for example, to have deleted confidential data, while the data in fact remained accessible.

Humans must remain the final decision-making authority. This, too, is part of the competency development that AI implementation demands.

What is the alternative? AI as a teammate?

If full automation is not yet advisable, what is the practical and reliably implementable alternative? One approach emerges from a field experiment conducted by Dell’Acqua and colleagues at Harvard Business School in partnership with Procter & Gamble [2].

776 experienced professionals worked on real product development challenges in different configurations: alone without AI, alone with AI, in two-person teams without AI, and in two-person teams with AI. The central finding: individuals working with AI achieved a quality level comparable to that of two-person teams without AI. Moreover, AI broke down functional silos: technically oriented professionals produced commercially balanced solutions when supported by AI, and vice versa.

The crucial point: AI did not act as an autonomous system delivering results for humans merely to approve. It acted as a teammate – a sparring partner that contributed ideas, broadened perspectives, and made gaps in thinking visible. The human remained in the process throughout, saw what was happening, and retained control over decisions.

This is precisely where the difference between automation and collaboration lies. In automation, a person delegates a task to AI and only sees the result. In collaboration, human and AI work on the result together. The human contributes context, experience, and judgement. The AI contributes speed, breadth, and consistency. Together, they deliver better results than either could alone.

For leaders, this carries a clear responsibility: the human must remain the final decision-making authority. Not because AI fundamentally delivers poor results, but because only a human has the context to judge whether a result is correct and appropriate in a specific situation.

What role does the human factor play?

AI already has enormous potential to support documented processes. Yet there are areas where the human factor will continue to play a decisive role: the ability to ask the right questions rather than simply deliver answers. The intuition for what a client truly needs, beyond what they articulate as a requirement. The decision about when a rule should be broken because the situation demands it. The ability to build trust, resolve conflicts, and guide people through change.

These competencies are gaining in value precisely because AI is becoming so capable in other areas. When standardisable tasks can be performed more quickly and at lower cost, the value of what cannot be standardised rises.

This is no comfort to those who fear for their jobs. But it is a reminder that the conversation about AI and work must not stop at “automate or not”. The more productive question is: how do we design the collaboration between humans and AI so that both can contribute their strengths?

The first step is simpler than one might think. It does not begin with a software licence. It begins with a question: which processes in our organisation are documented – and which exist only in the heads of our best people?

Sources

[1] Shapira, N. et al. (2026): “Agents of Chaos.” Northeastern University et al. Preprint. https://arxiv.org/abs/2602.20021v1

[2] Dell’Acqua, F. et al. (2025): “The Cybernetic Teammate: A Field Experiment on Generative AI Reshaping Teamwork and Expertise.” Harvard Business School Working Paper 25-043. https://ssrn.com/abstract=5188231

Would you like to strategically implement AI in your daily business operations?

The question is: what does this look like in your company? Do your employees have the competencies they need for this new working reality? Or are AI integration and competency development currently happening side by side – without a systematic connection?

In a no-obligation strategy session, we would be happy to introduce you to the NordAGI approach.