It sounds paradoxical: AI promises to take over routine tasks, optimize workflows and generally lighten our workload, yet those who use it intensively often end up with more work, not less. In an earlier post, we explored why the introduction of AI requires all employees to develop new competencies, including those who have never held management responsibilities ("AI First Means: New Skills for Everyone").

Our thesis: anyone working intensively with AI today needs to coordinate parallel AI processes, evaluate outputs and ensure their quality. This expanded task portfolio was certainly not part of anyone's job description before AI, because it is, in effect, a management function.

A recently published study from UC Berkeley confirms this thesis — and goes further, issuing an appeal to leaders that deserves serious attention.

How Enthusiastic AI Adoption Changes Work Habits

A research team at UC Berkeley spent eight months observing how generative AI changed work habits among roughly 200 employees at a US-based technology company.

What makes this study particularly revealing: the company did not mandate AI use. It simply provided enterprise subscriptions to commercially available AI tools. Employees began integrating the tools into their daily work on their own initiative and welcomed the change because, as the researchers note, it "enabled us to accomplish more, made our work feel more familiar, and made our work more rewarding."

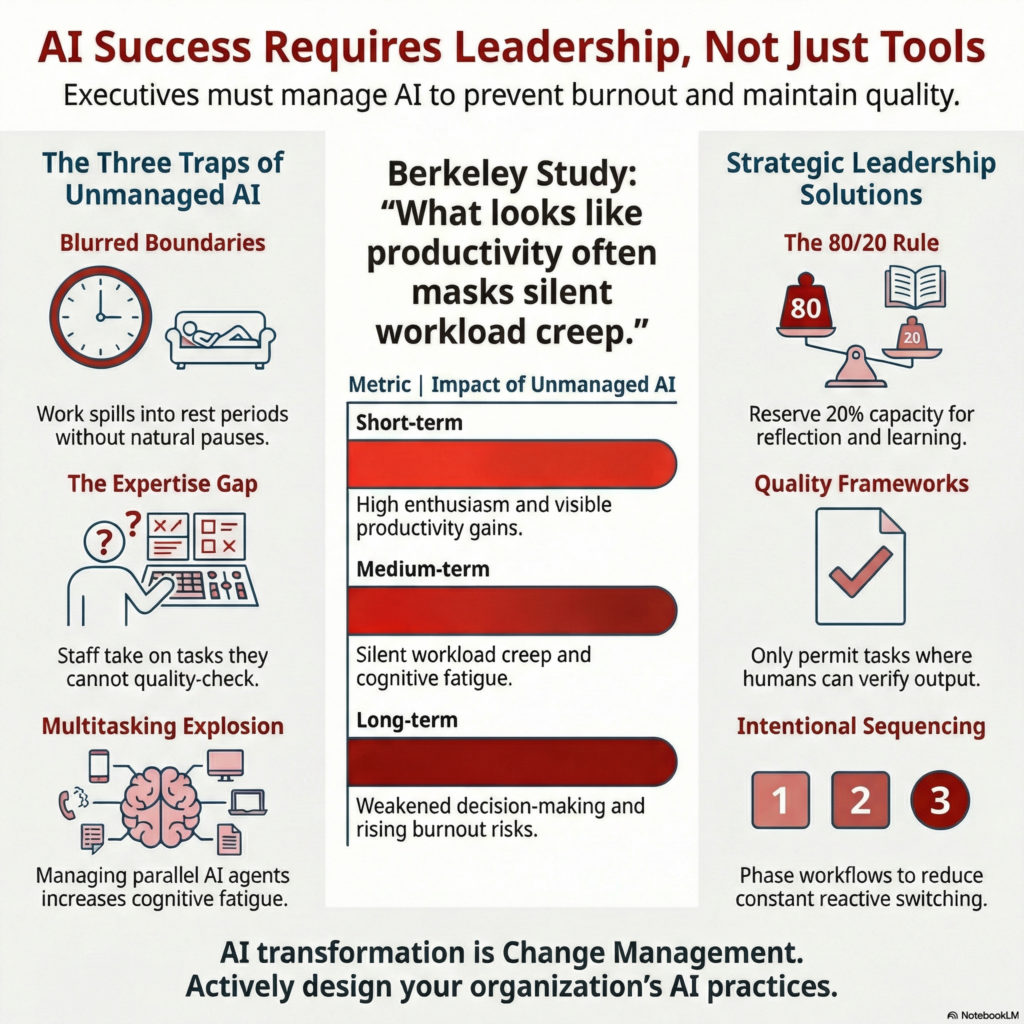

At first glance, the result was precisely what leaders hope for: motivated employees who deliver more, work faster and actively discover new tasks to take on. On closer inspection, however, three patterns emerged that require attention if these gains are to prove sustainable.

1. The Temptation to Do Everything Yourself

AI lowers the barrier to taking on tasks that previously belonged to others — after all, “the AI does the work.” Product managers start writing code. Researchers take on engineering tasks. Employees attempt work they would previously have outsourced, deferred, or avoided entirely.

The researchers describe what drove this: generative AI “provided what many experienced as an empowering cognitive boost” — it reduced dependence on others and offered immediate feedback. Employees described it as “just trying things” with the AI, but these experiments accumulated into a meaningful widening of job scope.

This initially sounds like a clear productivity gain, but there is a flip side: when someone writes code with AI support without having the necessary expertise, additional work is created elsewhere. According to the study, experienced engineers increasingly spent time “reviewing, correcting, and guiding AI-generated or AI-assisted work produced by colleagues.” These demands extended beyond formal code review — engineers found themselves “coaching colleagues who were ‘vibe-coding’ and finishing partially complete pull requests.” This oversight often surfaced informally, in Slack threads or quick desk-side consultations, adding to engineers’ workloads without appearing in any project plan.

This reveals a fundamental boundary: AI supports, but it does not replace domain expertise. If the final decision-maker is a human — and it must be — then a task can only be responsibly taken on when that person can verify the quality of the AI output. Anyone who cannot judge whether generated code is functional and secure should not take on that task, even if AI makes it appear technically possible.

2. The Boundary Between Work and Rest Blurs

A quick prompt during lunch. A question sent to the AI before leaving your desk. A "let the AI work on this while I step away." These actions don't feel like work: typing a prompt feels more like sending a brief message than undertaking a formal task, and the impulse is often fuelled by genuine curiosity and a desire to experiment.

According to the study, this behaviour gradually eroded natural breaks over weeks and months. Many employees only realized in hindsight that their downtime no longer provided the same sense of recovery. As the researchers observed, “the conversational style of prompting further softened the experience; typing a line to an AI system felt closer to chatting than to undertaking a formal task, making it easy for work to spill into evenings or early mornings without deliberate intention.”

Work became something that seemingly never required a natural pause.

3. Multitasking Explodes

This third pattern directly confirms what we described in our earlier post (“AI First Means: New Skills for Everyone”):

- AI enables working on multiple tasks in parallel: no one sits watching NotebookLM process a research brief or Claude Code generate an initial codebase. Employees run multiple agents simultaneously and revive long-deferred tasks because AI can handle them "in the background." The feeling of having a partner who shares the workload creates genuine momentum.

- The reality behind this: constant attention-switching, regular checking of AI outputs, a growing number of open tasks. Cognitive load rises, even as work feels productive. Or as one engineer in the study summarized: “You had thought that maybe you could work less. But then, really, you don’t work less. You just work the same amount or even more.”

- The researchers describe a self-reinforcing cycle: “AI accelerated certain tasks, which raised expectations for speed; higher speed made workers more reliant on AI. Increased reliance widened the scope of what workers attempted, and a wider scope further expanded the quantity and density of work.”

In our earlier post, we argued that coordinating parallel AI processes requires management competencies that need to be developed across the workforce. The Berkeley study provides supporting evidence: without these competencies, and without organizational frameworks, this new working reality can lead to overload.

The Paradox: Enthusiasm Is Not a Success Metric

Here lies the critical insight for leaders: the three patterns described above do not emerge because employees are forced to use AI. They emerge because AI use is enjoyable and feels rewarding. The intensification is voluntary — and that is precisely what makes it so difficult to detect.

When employees enthusiastically deliver more, leaders have no obvious reason to intervene. Yet the study indicates that this gradual intensification can lead to "cognitive fatigue, burnout, and weakened decision-making", consequences that can significantly diminish the initial productivity gains. The researchers are direct: "What looks like higher productivity in the short run can mask silent workload creep and growing cognitive strain."

This creates a leadership challenge that demands deliberate decisions. Rather than passively observing how AI reshapes daily work, organizations must actively shape it: How is AI used? When is it appropriate to stop? Which expansions of individual responsibility are genuinely value-creating — and which simply produce more output without higher quality?

This is the decisive point: more output alone is not a productivity gain. Producing more with AI without raising quality simply replicates in a business context what we already see on social media — a flood of AI-generated content that prioritizes quantity over quality. The leadership task is to demand both: higher output at equal or higher quality. Only then does genuine value emerge.

In our practice, we recently made exactly this decision: the deliberate commitment that team members should be utilized at no more than 80 per cent of their capacity. The remaining 20 per cent is not merely a buffer for the unexpected; it is intentional space for reflection, creative thinking and processing new ways of working. Anyone constantly operating at capacity has no room to build new competencies, precisely the competencies an organization needs on its path to AI-enabled work. The space to process what has been learnt, to reflect with team members and to learn from each other through informal exchange (what we call "Tribal Learning") is not a comfort zone. It is a strategic necessity to ensure successful adaptation to the new working reality.

From Euphoria to System: Why AI Is Not an IT Project but Change Management

The Berkeley study essentially describes an organization in Phase 1 of our AI transformation model ("AI Strategy 2026: Why Transformation Comes Before Tools"): the "Wild West." This phase is marked by unplanned AI experiments, no strategic alignment and various tools deployed in parallel, without measurable success criteria or a coordinated rollout. The company in the study provided licences and thereby started an interesting experiment.

The path from Phase 1 to sustainable AI use does not run through better tools, more experiments or more licences. It runs through deliberate organizational design:

- Clear frameworks that define which task expansions are productive, which lead to overload, and which must be categorically excluded because the individual expertise required to evaluate AI outputs is simply absent. If the final decision-maker remains human, that person must be able to assess the quality of the AI result. Where that capability is missing, even the best AI tool cannot compensate.

- Capability development that goes beyond technical skills to include the ability to critically evaluate AI outputs and realistically assess one's own workload ("Deskilling: Why AI Makes Us Smarter and Less Capable at the Same Time").

- A leadership culture that does not simply accept productivity gains as the new baseline, but deliberately decides: what happens with the time gained? Is it used to generate more output at higher quality, or does it quietly flow into work that nobody requested?

All of this is change management as a leadership responsibility — and it extends well beyond technology selection.

What Leaders Can Do Now

The Berkeley study recommends developing what the researchers call an “AI practice” — intentional norms and routines that structure how AI is used. This corresponds closely to what we describe as systematic AI transformation (“Challenges in AI Implementation — and Possible Solutions”):

- Deliberate deceleration at critical points. Before an important AI-supported decision is finalized, formulate a counterargument and explicitly verify alignment with organizational goals. Not because AI should be slower, but because acceleration without reflection produces the gradual overload the study describes. The researchers frame this as “protected intervals to assess alignment, reconsider assumptions, or absorb information before moving forward.”

- Sequencing instead of constant availability. Not every AI output requires an immediate response. Organizations that structure work into coherent phases rather than constant reactivity reduce cognitive fragmentation. As the study notes, "by regulating the order and timing of work — rather than demanding continuous responsiveness — sequencing can help organizations preserve attention."

- Deliberate spaces for human exchange. The more work is done individually with AI, the more decisive moments of team exchange become. Not as a nice-to-have, but as a counterweight to the individualizing effect of AI-enabled work — and as a contribution to quality assurance. Anyone who regularly discusses AI experiences, results, and failures with colleagues develops a better sense for the technology’s limitations.

Conclusion: The Real Transformation Is Organizational

AI does not make work “less.” It makes work “different.” And “different” does not automatically mean “better” — at least not without deliberate design.

The Berkeley study shows what can happen when companies treat AI as a technology project: short-term productivity gains that can tip into overload, declining quality and rising turnover in the medium term. The difference between companies that use AI successfully and those that stumble lies not in tool selection. It lies in whether leaders take the organizational dimension of change seriously — or hope that everything sorts itself out.

Want to know where your organization stands on the path to sustainable AI adoption?

Our AI Readiness Assessment shows you within three weeks which processes benefit from AI, which competencies your team needs and how to design the transformation for lasting impact — without the gradual overload that unguided AI adoption brings.

Would you like to strategically implement AI in your daily business operations?

The question is: What does this look like in your company? Do your employees have the competencies they need for this new work reality? Or are AI integration and competency development currently happening side by side – without systematic connection?

In a no-obligation strategy session, we would be happy to introduce you to the NordAGI approach.