Why “AI is a leadership topic” only becomes true when business leaders practise what they preach

In LinkedIn posts, keynote speeches, and consulting pitches, one phrase has firmly established itself: “AI is a leadership topic.” The response is overwhelmingly one of agreement. Everyone nods. Nobody objects. And the statement is not wrong, either. But it is rarely as straightforward as it sounds.

One reason: the phrase leaves unanswered which consequences business leaders should draw from this insight. Which decisions follow? Which behaviours should be encouraged, and which ones avoided? And what happens when someone declares AI a priority but has yet to develop any first-hand AI competence of their own?

TL;DR:

“AI is a leadership topic” has become a consensus statement, yet it is rarely thought through to its logical conclusion. Three recent studies demonstrate that AI usage changes the competencies of those who use it. Those who treat AI as a shortcut lose skills. Those who treat it as a sparring partner – asking questions, critically examining outputs, and maintaining their own cognitive effort – become measurably more competent.

For business leaders, this has two implications: first, they need their own AI competence, not least to recognise which usage patterns within their organisation are productive and which are not. Second, they must lead by example rather than by decree. “AI is a leadership topic” only becomes concrete when senior leaders consciously set the direction.

“AI is a leadership topic” – what follows from that?

Three recent studies offer surprisingly concrete answers to these questions, each from a distinctly different angle:

- A controlled experiment with 52 experienced software developers shows that AI usage can either strengthen or weaken competencies, depending on how AI is used [1].

- An eight-month observational study within a technology company documents that AI can increase workload rather than reduce it when nobody steers the dynamics [2].

- A survey of more than 80,000 people across 159 countries confirms that those who use AI as a sparring partner report measurable benefits [3].

What connects these three perspectives: AI usage is not neutral. It changes the competencies of those who use it. The direction of that change depends decisively on how AI is used. And this is precisely where the statement “AI is a leadership topic” gains real substance.

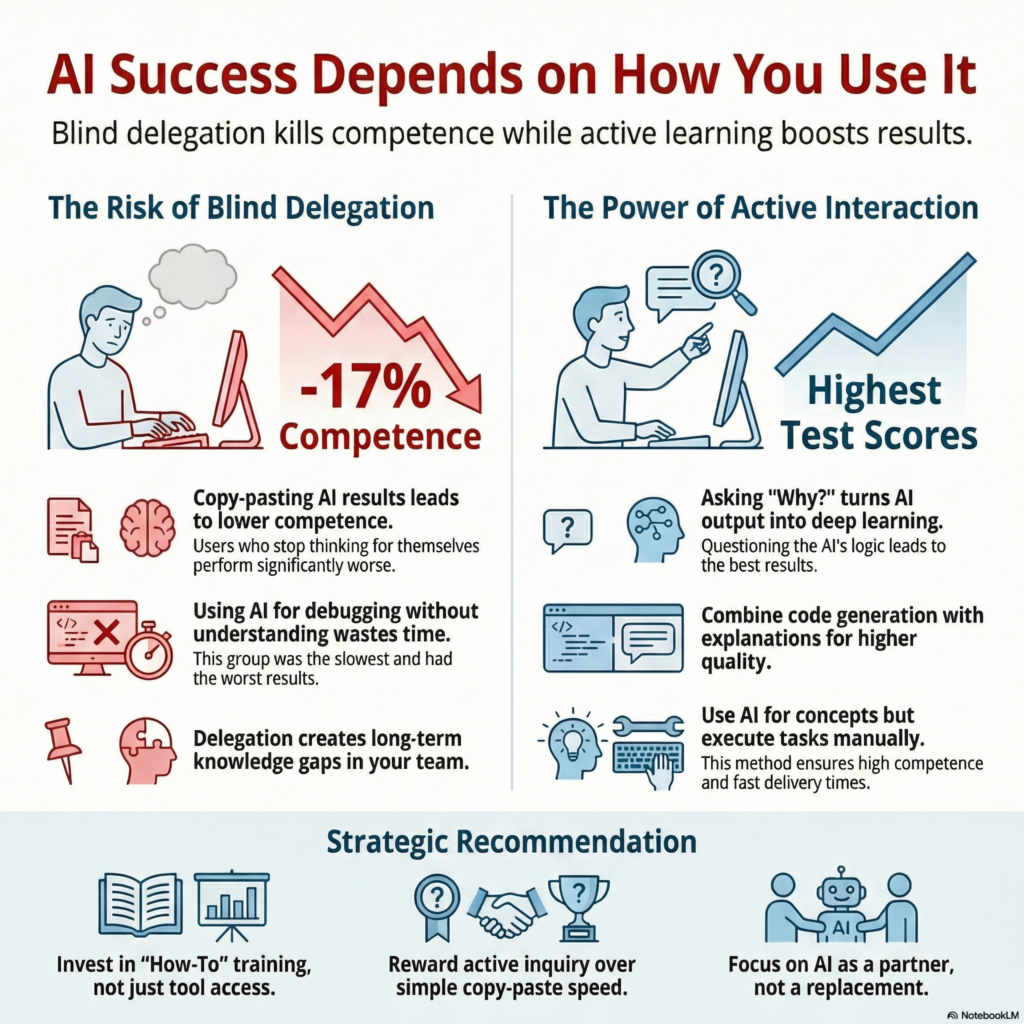

What the research shows: Two paths, one tool

In early 2026, Judy Hanwen Shen and Alex Tamkin published a study [1] examining how AI usage affects the acquisition of new skills. The experimental design was straightforward: 52 experienced software developers were asked to complete tasks using an unfamiliar programming library. One half had access to an AI assistant, the other did not. Afterwards, a competency test assessed whether participants had genuinely understood the new concepts, covering conceptual understanding, code reading, and debugging.

The results appear counterintuitive:

- The AI group scored 17 per cent lower on the competency test than the comparison group.

- The AI group was, on average, not faster either. In fact, they were slower than the comparison group, which had no AI access at all.

In short: in this experiment, AI usage neither increased productivity nor promoted learning. Does the study confirm what AI sceptics have long claimed? No, because there is a further layer to the findings, and it paints a considerably more nuanced picture.

The researchers identified six typical interaction patterns:

- Half of these patterns led to measurable competency loss.

- The other half maintained or increased the ability to learn.

The most important finding: the participants with the best results used AI just as intensively as those with the worst results. The difference lay not in whether or how much AI was used, but in the manner of its use.

Which patterns negatively impacted competency?

Blindly delegating tasks and troubleshooting does not produce good outcomes:

- Some participants delegated the entire task to the AI, copied the generated code, and submitted it as their result. They were the fastest, but scored poorly on the competency test.

- Others began with their own attempts, but progressively shifted the work to the AI. By the second part of the task, they had stopped thinking for themselves. Their test results were equally unconvincing.

- A third group used AI repeatedly to find errors, without ever understanding why the code was not working. They took the longest and achieved the weakest result of all participants.

Which behaviour patterns had a positive effect on competency?

Asking questions, actively engaging with AI, and learning in the process:

- The most successful group did allow AI to generate code, but then asked targeted comprehension questions: “What does this code do? Why does it work this way?” They achieved the highest result in the test.

- A second group formulated questions that combined code generation with explanation from the outset. Their result was the second highest in the study.

- The third successful group asked exclusively conceptual questions and implemented the code themselves. They achieved strong results with the second-shortest completion time.

The central insight: those who thought along, learned. Those who merely delegated, gained no competence and became increasingly dependent on the AI. The problem is not AI itself, but the abdication of cognitive effort. Or, as the researchers summarise it: the most successful participants remained cognitively in the driver’s seat. They used AI as a sparring partner, not as a crib sheet.

For business leaders, this carries an immediate consequence: without active oversight, there is a risk that a few high performers become ever more competent through AI, whilst another part of the team gradually loses capability. This divergence is not caused by the technology itself, but by the failure to recognise different usage patterns and respond to them appropriately.

An important caveat: the study comprises 52 participants in a specific context. Direct transferability to other professional domains has not been empirically established. The underlying mechanism, however – that delegating tasks without understanding the process does not build competence – is well grounded in learning psychology and is by no means confined to software development.

What this means for business leaders

AI without context – business leaders without AI competence

It is an experience familiar to many who work with AI: without context, AI delivers generic results. An instruction such as “Produce a competitive analysis” yields a document that reads professionally but could belong to any organisation. Providing AI with strategic context, asking the right questions, and critically examining the answers produces something considerably more valuable. We explore this further in our blog post.

The same pattern applies to business leaders seeking to initiate an AI transformation within their organisation. Deep technical knowledge or programming experience is certainly not required. But AI competence at leadership level enables senior leaders not only to initiate the transformation process but also to accompany it critically, because they understand the fundamental mechanisms and their potential effects.

What happens when nobody steers

A UC Berkeley study [2] provides a concrete example of what occurs when AI is deployed within an organisation and the leadership team fails to recognise the emerging dynamics early enough. The researchers observed a technology company over eight months and documented three developments:

- Employees spontaneously took on tasks from other departments because AI appeared to make this easy.

- The boundaries between work and personal time blurred, as AI interactions did not feel like work – not even during leisure hours.

- Cognitive load increased, as employees orchestrated multiple AI processes in parallel.

The outcome was not efficiency but exhaustion. Not because the AI performed poorly, but because nobody managed the dynamics.

The effects of AI on competency development and workload within teams often remain invisible until the symptoms become impossible to ignore. The studies paint a fragmented picture: some employees become more productive and more competent, others stagnate, and still others lose capabilities. Recognising these differences early requires an understanding of the mechanisms behind them.

AI as a sparring partner: The other side of the coin

The preceding sections might suggest that AI is primarily a risk. That impression is misleading. The opposite is the case, and the data support this as well.

To recall: the most successful participants in the Shen-Tamkin study achieved the best result on the competency test, and they used AI extensively. They learned not in spite of AI, but with AI. They did not treat the AI as a servant delivering ready-made answers, but as a sparring partner that explains, contextualises, tests assumptions, and identifies gaps in understanding.

This mode works not only in a laboratory setting but also in practice. The Anthropic study, surveying over 80,000 respondents across 159 countries [3], demonstrates this clearly. Cognitive partnership – meaning AI as a sparring partner for thinking and problem-solving – was among the most frequently cited concrete benefits. Many respondents also named learning and competency development as realised value.

For business leaders, this means that AI as a strategic sparring partner is not a future scenario. People already use AI in this capacity today and report measurable value. A sparring partner that challenges positioning. That surfaces counterarguments. That reveals blind spots in strategy development. Not as a replacement for one’s own thinking, but as a catalyst for it.

And this is precisely where the circle closes. From our perspective, “AI is a leadership topic” becomes concrete when business leaders do two things:

- Use AI as a sparring partner themselves and, in doing so, learn what works and what does not.

- Lead by example and establish this approach to AI usage across the organisation.

Those who do both not only deploy AI productively. They also create a culture in which employees come to see AI as a tool for learning, rather than a shortcut for switching off.

Talk is easy …

To be transparent: this article was produced in collaboration with an AI. But not by instructing it to “find a few studies about AI and write a blog post for our website”. Had we taken that approach, the resulting text would likely have sounded alarmist, warned dramatically about impending competency loss, listed three recommendations for action, and concluded with an even more dramatic appeal.

Instead, we read the studies, compared the core findings against existing insights and assessments, and then discussed them with the AI in sparring partner mode. Not once, but across multiple iterations. The AI did not simply nod and confirm. It pushed back and surfaced additional perspectives, which it could draw upon because:

- our AI is configured to tell us what we need to hear, not what we want to hear, and

- we have provided it with the necessary context as a knowledge base, enabling it to incorporate a range of perspectives that might otherwise have gone unrecognised.

The dialogue, and at times the genuine wrestling, between human judgement and machine intelligence is not a theoretical concept. It is our daily working reality.

What we have learned from working with AI: our AI systems are explicitly configured not to be yes-men. When a draft is unbalanced, the AI flags it. When an argument has gaps, it names them. When the tone drifts towards alarmism, it intervenes. This can be uncomfortable at times. But it produces better results than the alternative: an AI that agrees with everything, and thereby promotes exactly the competency loss that the research describes.

What this means for AI adoption in SMEs and family businesses

Three insights from the research and from our practice:

- AI competence begins with the business leader. Not as IT-level expertise, but as first-hand experience of how AI operates, where it helps, and where it misleads. Those who have never used AI as a sparring partner cannot assess whether their team is using AI productively or becoming dependent on it.

- The mode determines the outcome. The same AI can build competencies or erode them. The Shen-Tamkin study [1] reveals clear differences between sparring partner usage and pure delegation. Recognising this distinction and embedding it within the organisation is a leadership task. It can only be fulfilled by those who know both modes from their own experience.

- Leading by example is harder, but more effective in the long run, than issuing directives. When business leaders use AI as a strategic tool and communicate this openly, a different organisational culture emerges. When AI is merely “recommended” via email, success is doubtful. And the practice of leading by example also builds sensitivity for dynamics that may look like efficiency but end in overwork.

Sources

Would you like to strategically implement AI in your daily business operations?

If you would like to explore where your organisation stands in terms of AI competence and how to establish a productive approach to AI, let’s have a conversation. Our AI Leadership Readiness Assessment is designed as a structured starting point for precisely this purpose.

In a no-obligation strategy session, we would be happy to introduce you to the NordAGI approach.